OpenAI strikes back—GPT-5.5, ChatGPT Images 2.0, and more - Sync #568

Plus: Mythos leaked; DeepSeek V4; Anthropic gets more money and more compute; SpaceX to acquire Cursor; robots beat humans at Beijing half-marathon; Kimi K2.6; Qwen3.6-Max; and more!

Hello and welcome to Sync #568!

This week, OpenAI returned with GPT-5.5, ChatGPT Images 2.0, and a couple more new features that lay the groundwork for its super app. We take a closer look at what OpenAI has brought to the table in this week’s issue of Sync.

Elsewhere in AI, an unauthorised group gained access to Claude Mythos, but this did not prevent Anthropic from securing additional multibillion-dollar deals and more computing power. Meanwhile, Cursor joins SpaceX, DeepSeek releases its long-awaited flagship model, the US accuses China of “industrial-scale” campaigns to steal AI secrets, and Anthropic explains why Claude Code has had so many issues in recent weeks.

In robotics, humanoid robots have beaten humans at the Beijing half-marathon, Sony’s robot has bested elite human table tennis players, and Unitree G1 tries wheels instead of feet.

This week’s issue of Sync also features: what humanity might look like in the year 1 million A.D.; a beanie that can read your brain waves; Colossal Biosciences says it has cloned red wolves; Tim Cook stepping down as CEO of Apple; what Yann LeCun is working on; and more.

Enjoy!

OpenAI strikes back—GPT-5.5, ChatGPT Images 2.0, and more

OpenAI looked like it was on the ropes. Anthropic, propelled by the surge in popularity of Claude Code and a laser focus on enterprise customers, grew so aggressively that OpenAI raised a red alert not once but twice this year. Then, in mid-April, Anthropic announced Claude Mythos, a model with cybersecurity capabilities so potent that it spooked bankers and CEOs, followed days later by Claude Opus 4.7. The picture was clear: Anthropic was on a roll, and OpenAI was being left behind.

Last week, OpenAI struck back. Between Monday and Thursday, the company shipped ChatGPT Images 2.0, workspace agents for enterprise teams, Chronicle, and GPT-5.5—its new flagship model—in an attempt to reclaim the lead in the AI race.

GPT-5.5

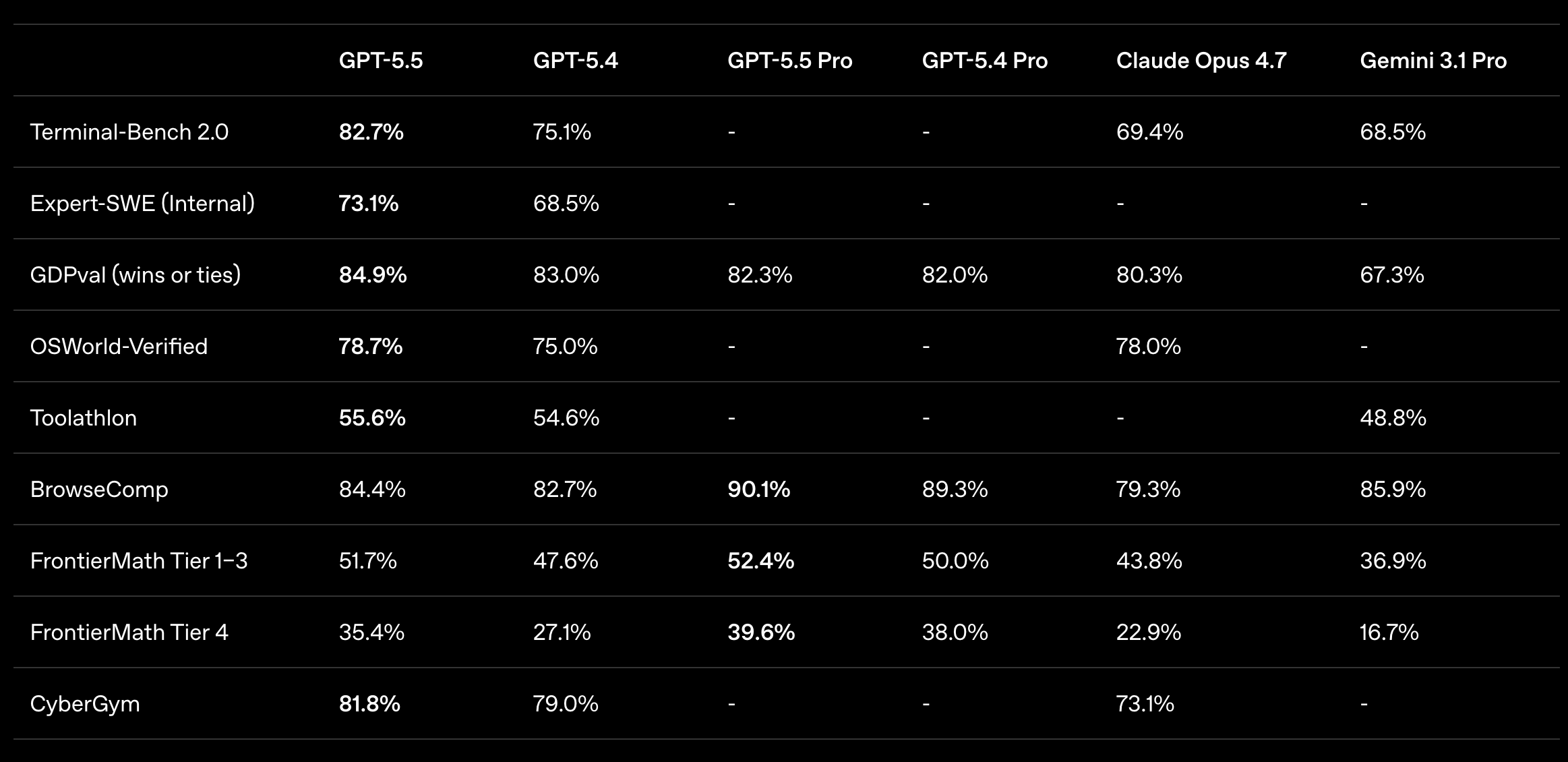

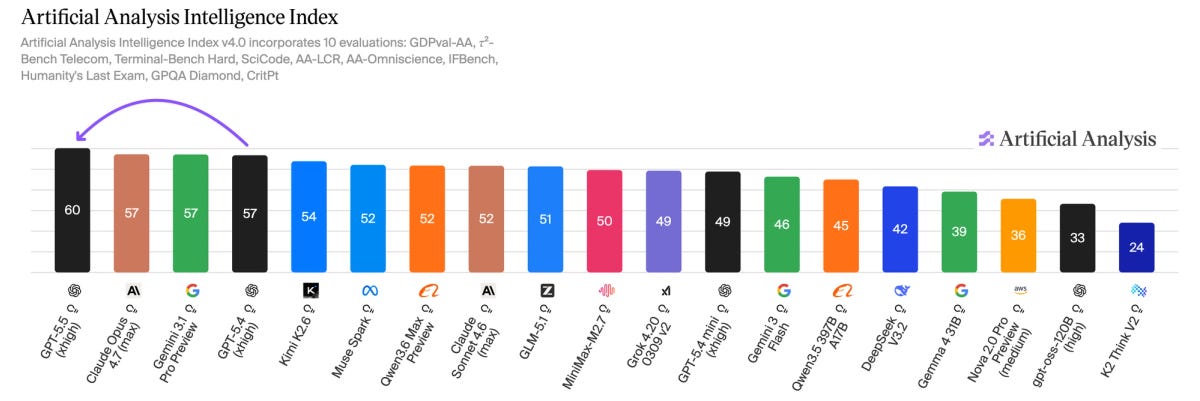

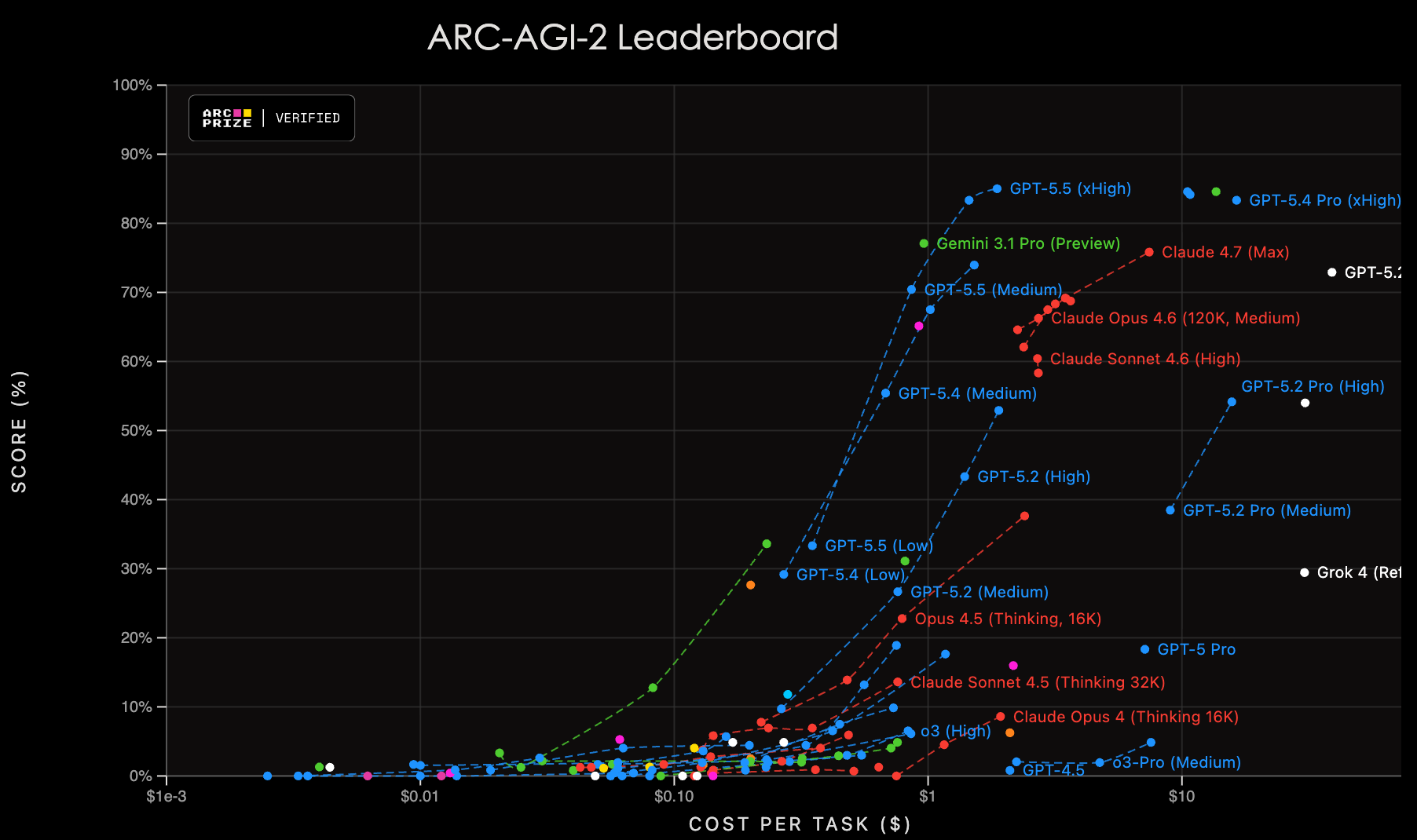

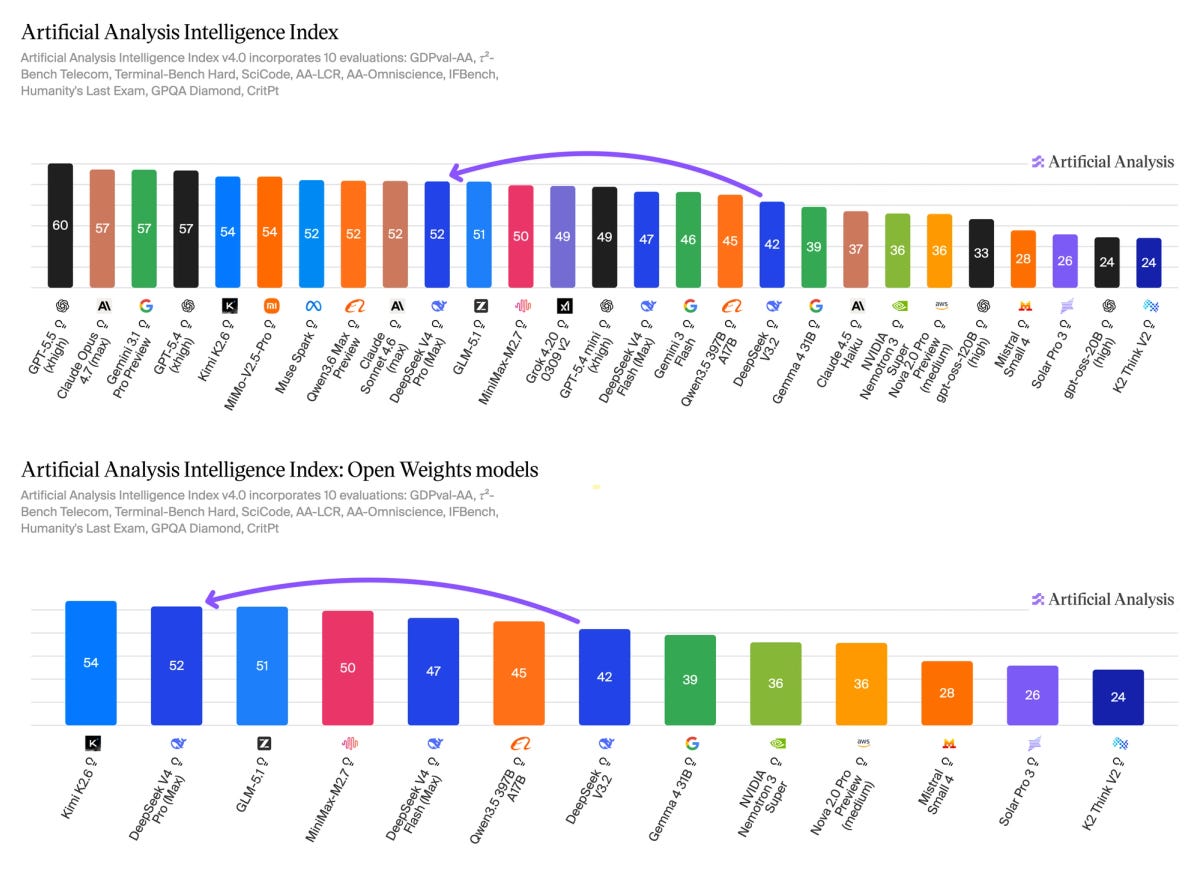

OpenAI calls GPT-5.5 the “smartest and most intuitive” model it has ever built, and the benchmarks broadly support that claim. It retook the number-one spot on Artificial Analysis’s Intelligence Index by three points, ending a three-way tie with Anthropic and Google. Long-context retrieval, a genuine weak spot for prior GPT models, saw the biggest jump: performance on a 512K–1M token recall benchmark roughly doubled, from 36.6% to 74.0%. It also set new highs on terminal-based coding and agentic computer use, and it now tops the ARC-AGI-2 benchmark.

But the lead is uneven. Opus 4.7 still wins on SWE-bench Pro (the coding benchmark OpenAI itself told the industry to adopt because it was less contaminated), on GPQA Diamond, and on Humanity’s Last Exam.

The full list of evaluations can be found at the bottom of the GPT-5.5 announcement page and in the model's System Card. Notably, for the first time in recent memory, OpenAI's official benchmarks include competitor models. Previous announcements compared new releases only to OpenAI's own predecessors; this time, the table includes Opus 4.7 and Gemini 3.1 Pro. Read that as a signal of how tight the frontier has become.

The more uncomfortable number is hallucinations. On the Artificial Analysis Omniscience benchmark, GPT-5.5 got more questions right than any model tested—57%—but hallucinated on 86% of the ones it got wrong, compared to 36% for Opus 4.7. For a model priced at double its predecessor’s API rate ($5/$30 per million tokens, up from $2.50/$15), and pitched squarely at enterprise, legal, medical, and financial workflows, that is a result that should raise some caution.
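To put those two percentages on the same footing, here is a rough back-of-the-envelope sketch. It assumes the 86% figure applies to every question GPT-5.5 did not answer correctly, with the remainder being abstentions. The function name is made up for illustration, and Opus 4.7 is left out because its accuracy is not quoted above.

```python
def hallucinated_share(accuracy: float, hallucination_rate: float) -> float:
    """Share of ALL questions that got a wrong answer rather than an abstention.

    Assumption: hallucination_rate is measured over the questions the model
    did not answer correctly.
    """
    not_correct = 1.0 - accuracy
    return not_correct * hallucination_rate


# Figures quoted above for GPT-5.5: 57% correct, 86% hallucination rate.
gpt55_share = hallucinated_share(accuracy=0.57, hallucination_rate=0.86)
print(f"GPT-5.5: about {gpt55_share:.0%} of all questions answered incorrectly")
```

Under that assumption, roughly 37 in every 100 questions would come back with a wrong answer instead of an "I don't know".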

There is also the safety question. GPT-5.5 is the first OpenAI model classified “High” on cybersecurity capability under the company’s Preparedness Framework, essentially the same tier that made Mythos a headline risk. The UK AI Security Institute (AISI) found a universal jailbreak within six hours of expert red-teaming. OpenAI says it patched the vulnerability, but AISI was unable to verify the final configuration.

The cybersecurity comparison with Mythos is worth exploring a bit more. The UK AISI found GPT-5.5 to be the strongest model overall on its narrow cyber tasks, and the model completed a 32-step corporate network attack simulation, one estimated to take an expert 20 hours, in one out of ten attempts. Mythos reportedly managed three out of ten. The two models are in the same ballpark of offensive capability, yet Anthropic deemed Mythos too dangerous to release publicly. OpenAI shipped GPT-5.5 to every paying subscriber on day one. It is the starkest illustration yet of how differently the two companies weigh the same risk.
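With only ten attempts each, one success versus three is a noisy comparison. The sketch below is one way to sanity-check the "same ballpark" reading: it computes an approximate 95% Wilson score interval for each success rate. It assumes the attempts were independent and the test setups comparable, which the reports do not confirm, and the helper name is hypothetical.

```python
import math


def wilson_interval(successes: int, attempts: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a binomial success rate."""
    p = successes / attempts
    denom = 1 + z**2 / attempts
    centre = (p + z**2 / (2 * attempts)) / denom
    half_width = z * math.sqrt(p * (1 - p) / attempts + z**2 / (4 * attempts**2)) / denom
    return centre - half_width, centre + half_width


gpt55_low, gpt55_high = wilson_interval(1, 10)    # GPT-5.5: 1 of 10 attempts
mythos_low, mythos_high = wilson_interval(3, 10)  # Mythos: 3 of 10 (as reported)

# Two intervals overlap when each one starts before the other ends.
intervals_overlap = gpt55_high >= mythos_low and mythos_high >= gpt55_low
print(f"GPT-5.5: {gpt55_low:.0%} to {gpt55_high:.0%}")
print(f"Mythos:  {mythos_low:.0%} to {mythos_high:.0%}")
print("Intervals overlap:", intervals_overlap)
```

Both intervals are wide (roughly 2% to 40% and 11% to 60%) and they overlap, so ten runs apiece cannot reliably separate the two models on this task.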

The developer reception has been warm, but divided. Cursor's CEO called GPT-5.5 "noticeably smarter and more persistent," and Nvidia shared an internal engineer's line that losing access "feels like having a limb amputated." But not everyone was convinced. Theo Browne, the developer behind t3.chat and one of the most-watched AI voices on YouTube, called GPT-5.5 “smart” but also “weird, hard to wrangle, and too expensive.” His full video review, titled “I don’t really like GPT-5.5…”, acknowledged that it writes the best code he has ever seen from a model, but argued that its “lazy” execution style and poor context-window handling made it frustrating to use in practice. Simon Willison, who had pre-release access, described it as “fast, effective and highly capable” but found that on his long-running SVG benchmark, default GPT-5.5 actually lagged GPT-5.4. It only pulled ahead when reasoning effort was cranked to maximum, at which point token costs ballooned.

Those costs are the other sticking point. API rates doubled to $5/$30 per million input/output tokens—more expensive than Opus 4.7's $5/$25—and OpenAI's defence is that GPT-5.5 uses roughly 40% fewer tokens to reach equivalent results, making the effective increase closer to 20%. Developers are sceptical—a 100% price hike for less than 10% benchmark gain is a hard sell.
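OpenAI's "closer to 20%" figure follows from simple arithmetic, sketched below. It assumes the 40% token saving applies evenly to everything you are billed for and that the workload is otherwise unchanged; the function name is illustrative only.

```python
def effective_price_change(price_multiplier: float, token_reduction: float) -> float:
    """Relative change in cost per task when price and token usage both change.

    price_multiplier: new price divided by old price (2.0 means doubled).
    token_reduction: fraction of tokens saved for the same result (0.40 means 40% fewer).
    """
    return price_multiplier * (1.0 - token_reduction) - 1.0


# Doubled price ($2.50/$15 to $5/$30), roughly 40% fewer tokens per task.
change = effective_price_change(price_multiplier=2.0, token_reduction=0.40)
print(f"Effective cost change per task: {change:+.0%}")
```

Twice the price for 60% of the tokens works out to 1.2 times the old cost per task. If your workload sees a smaller token saving than 40%, the effective increase moves back towards 100%.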

GPT-5.5 is available to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex. It is also available via the OpenAI API.

ChatGPT Images 2.0

Days before unveiling GPT-5.5, OpenAI released ChatGPT Images 2.0—an upgraded version of its text-to-image generator, built into ChatGPT. Sam Altman said that the jump in performance and quality is similar to the one from GPT-3 to GPT-5.

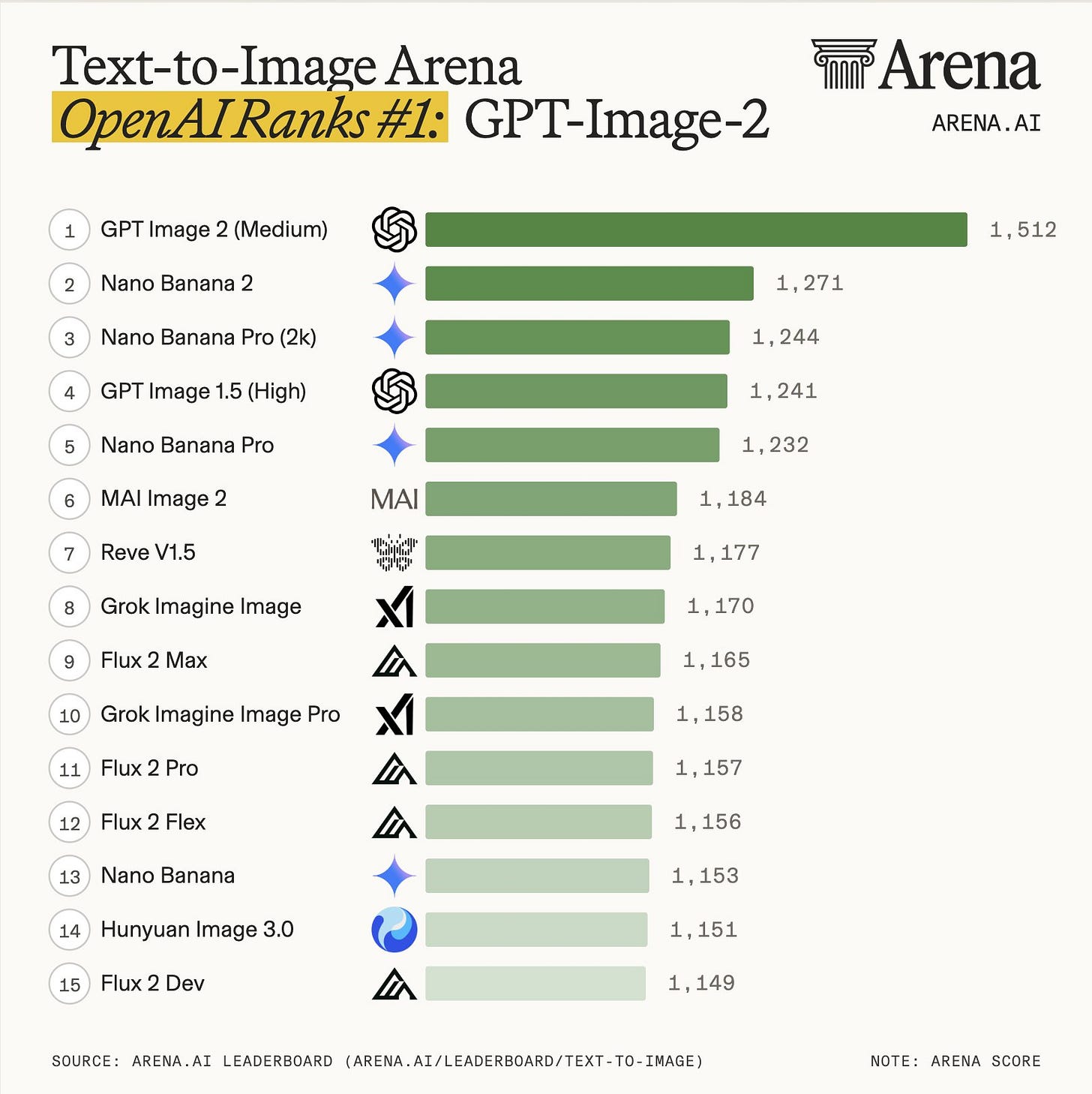

According to OpenAI, the new model is a genuine step-change in instruction following, text rendering—including in non-Latin scripts like Japanese, Korean, and Chinese—and stylistic range, from photorealistic images to manga pages. It is the first OpenAI image model with thinking capabilities. When paired with a reasoning model, it can search the web, generate up to eight distinct images in one go, and self-check its own outputs. On Arena.ai’s image leaderboard, it opened with a roughly 250-point Elo gap over its nearest competitor, Google’s Nano Banana 2.

Unlike GPT-5.5, Images 2.0 is available to all ChatGPT users, including those on the free tier. The advanced thinking features—web search, multi-image generation, and output self-checking—are gated to paid subscribers. Images 2.0 is also available in Codex and the API (as gpt-image-2).

The super app takes shape

The less flashy launches may matter more in the long run. Chronicle gives Codex a persistent memory built from periodic screenshots of your screen—think Microsoft's controversial Recall feature, but scoped to a coding agent rather than an entire operating system. The idea is that Codex gradually learns how you work, so it can pick up where you left off without re-explaining your project. Workspace agents, available for Business and Enterprise plans, replace custom GPTs with Codex-powered agents that run in the background, plug into Slack and Salesforce, and keep working after you close the browser. Together with Images 2.0 integration inside Codex, these pieces sketch the outline of the “super app” that Greg Brockman and Sam Altman have been promising: a single platform that codes, designs, remembers, and acts on your behalf across tools.

It is an ambitious vision, and it lands at precisely the moment Anthropic is building its own version of the same thing with Claude Code, Claude Cowork, and the recently launched Claude Design.

OpenAI is back at the top, but narrowly and at a higher price. GPT-5.5 leads the Artificial Analysis Intelligence Index by three points over Opus 4.7—a real lead, but not a decisive one. Anthropic still wins on some benchmarks, and Opus 4.7's hallucination rate is less than half of GPT-5.5's. The gap at the top is razor-thin, but for the first time in months, OpenAI is ahead.

If you enjoy this post, please click the ❤️ button and share it.

🦾 More than a human

From Biohacking to Healthcare: The Growing Pains of the Longevity Industry

This article explores the growing longevity biotech industry, where companies are developing drugs that target the biological causes of ageing rather than treating age-related diseases one by one. Well-funded startups like Altos Labs and Retro Biosciences are using AI to advance therapies ranging from cellular rejuvenation to clearing out damaged "zombie" cells, with several now entering early human trials. However, the field still faces big hurdles—scientists lack a complete understanding of what drives ageing, there are no agreed ways to measure it, and regulators don't recognise ageing as a treatable condition.

This Beanie Is Designed to Read Your Thoughts

Instead of a bunch of bulky electrodes attached to the head, Sabi is proposing a far more comfortable take on brain-computer interfaces: a beanie or baseball cap packed with up to 100,000 tiny sensors that read brain activity and turn your inner speech into text on screen. The company plans to release the device by the end of the year, initially targeting about 30 words per minute. Key challenges include the natural variation in how different brains work, the need for a device that works straight out of the box, and keeping deeply personal neural data secure.

🔮 Future visions

What would the world look like in a million years? What would humanity become? Would we colonise the galaxy, shed our physical form, or go extinct? Would our descendants even be considered human? In this video, Isaac Arthur answers these questions and more about the deep future.

🧠 Artificial Intelligence

Anthropic’s Mythos Model Is Being Accessed by Unauthorized Users

Claude Mythos, Anthropic's most capable model, which the company considers too dangerous to release to the public, has been accessed by a small group of users who weren't supposed to have it. They got in by combining one member's contractor credentials with a guess at the model's web address, informed by details leaked in a separate data breach. Anthropic only allows vetted companies to use Mythos because it can find and exploit security flaws in major software, but the group says they've only used it for harmless tasks.

Anthropic and Amazon expand collaboration for up to 5 gigawatts of new compute

Amazon is putting another $5 billion into Anthropic, with up to an additional $20 billion in the future. This builds on the $8 billion Amazon has previously invested. Anthropic, meanwhile, has agreed to spend over $100 billion on Amazon's cloud services over the next ten years. The deal gives Anthropic access to up to 5 gigawatts of computing power, built largely on Amazon's custom AI chips, to keep up with fast-growing demand for Claude. The extra capacity is expected to ease the reliability issues users have been experiencing recently.

Google Expands Anthropic Investment With $40 Billion Commitment

Google plans to invest up to $40 billion in Anthropic, starting with $10 billion now and more to follow if the company hits certain targets. This is part of a bigger $65 billion funding round as Anthropic gears up for a possible stock market listing. The money is needed to pay for the vast computing power behind its fast-growing product, Claude Code, which has helped triple its revenue to $30 billion a year.

Anthropic has surged to a trillion-dollar valuation on secondary markets, overtaking OpenAI

Anthropic is now valued at roughly $1 trillion on private trading markets, Business Insider reports, far surpassing the $380 billion it was worth just three months ago. Buyers are scrambling to get hold of shares, driven by excitement over the company's fast-growing AI coding tool, Claude Code. Meanwhile, interest in rival OpenAI has cooled, with its shares trading near or below its last valuation of $852 billion. Much of the frenzy appears to be driven by fear of missing out rather than hard financial logic.

SpaceX Has Deal for Right to Acquire Cursor for $60 Billion

SpaceX has struck a deal giving it the right to buy Cursor, a popular AI coding tool, for $60 billion later this year. The acquisition itself is on hold to avoid disrupting SpaceX’s upcoming stock market listing. In the meantime, SpaceX’s vast computing power will replace a funding round Cursor had been planning. The move reflects Elon Musk’s push to catch up with competitors in AI coding tools following his merger of SpaceX with his AI company xAI.

DeepSeek V4 Preview Release

The long-awaited DeepSeek V4 is out. It comes in two variants: DeepSeek-V4-Pro with 1.6T parameters (49B activated) and DeepSeek-V4-Flash with 284B parameters (13B activated), both of which are available on Hugging Face. V4 Pro broadly matches the best closed-source models in coding, maths, and reasoning at a fraction of the cost. Independent testing places it as the second-strongest open-weights model overall, though it still trails in factual accuracy and has a very high hallucination rate. A key technical change is a new attention mechanism that compresses older context and focuses on the most relevant parts, dramatically cutting the compute and memory needed to support the new default one-million-token context window. V4 is also the first frontier model optimised for Huawei’s Ascend chips for inference, though training still appears to rely heavily on Nvidia hardware. The release was delayed by chip migration difficulties, talent departures, and DeepSeek’s decision to seek outside funding for the first time (more on which below).
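The "activated" figures are a large part of the cost story: only a small slice of each model's parameters is used for any given token. A quick sketch of the ratio, using only the parameter counts quoted above (the dictionary and function names are illustrative):

```python
# (total parameters, parameters activated per token), as quoted above.
MODELS = {
    "DeepSeek-V4-Pro": (1.6e12, 49e9),
    "DeepSeek-V4-Flash": (284e9, 13e9),
}


def active_fraction(total_params: float, activated_params: float) -> float:
    """Fraction of a model's parameters that are used for a single token."""
    return activated_params / total_params


for name, (total, activated) in MODELS.items():
    share = active_fraction(total, activated)
    print(f"{name}: {share:.1%} of parameters active per token")
```

That comes to about 3.1% for Pro and 4.6% for Flash, which is why per-token compute tracks the smaller number rather than the headline parameter count.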

Tencent, Alibaba in talks to invest in DeepSeek at over $20 billion valuation, The Information reports

According to a report by The Information, Chinese tech giants Tencent and Alibaba are in talks to invest in DeepSeek, which is now seeking a valuation of over $20 billion, up from $10 billion just days earlier. The company, which has never taken outside funding before, wants to raise at least $300 million to help cover the high costs of building advanced AI.

Our eighth generation TPUs: two chips for the agentic era

Google has unveiled its eighth-generation Tensor Processing Units (TPUs)—TPU 8t for training models and TPU 8i for running them. The training chip is about three times faster than its predecessor and can scale to enormous clusters, while the inference chip is designed for the quick, low-latency responses that AI agents need, offering roughly 80% better value for money. Both chips are more power-efficient, run on Google’s own processors, and work with widely used AI software. They are expected to become available later this year.

Jeff Bezos Nears $10 Billion Funding for AI Lab, FT Says

Jeff Bezos is close to raising $10 billion for his AI startup Project Prometheus, valuing it at $38 billion, the Financial Times reported. The company, co-founded with scientist Vik Bajaj, is building AI that understands the physical world to improve manufacturing in areas like aerospace and cars. JPMorgan and BlackRock are among the backers, and the team includes hires from OpenAI and Google DeepMind.

U.S. accuses China of “industrial-scale” campaigns to steal AI secrets

The White House accused China of stealing American AI on a massive scale through the distillation of leading models. US companies like OpenAI and Anthropic say Chinese labs used thousands of fake accounts to extract millions of responses from their systems. The government plans to help US companies defend against such attacks, but has not yet announced any penalties. The timing is notable, coming just before Trump's trip to Beijing next month.

Kevin Weil and Bill Peebles exit OpenAI as company continues to shed ‘side quests’

OpenAI is losing three key leaders—Kevin Weil, Sora creator Bill Peebles, and enterprise tech chief Srinivas Narayanan—as the company scales back experimental projects to focus on business products. Sora, its AI video tool, was costing roughly $1 million a day and was shut down last month, while Weil's science research team is being merged into other groups. Peebles cautioned that cutting back on exploratory work could hurt the company in the long run. The exits are part of OpenAI's shift from big bets towards more immediate commercial goals.

An update on recent Claude Code quality reports

Anthropic has explained the cause of recent Claude Code quality issues reported by users, tracing them to three separate changes: lowering the default reasoning effort from high to medium, a buggy caching optimisation that repeatedly cleared reasoning history from idle sessions, and a system prompt change aimed at reducing verbosity. All three have now been fixed. Anthropic has denied widely circulated claims that it intentionally degraded its models—a theory that gained significant traction among users online.

Meta has hired five founding members of Mira Murati’s Thinking Machines Lab in a systematic talent raid

Five founding members of Mira Murati's AI startup, Thinking Machines Lab, have been picked off by Meta after she turned down a reported $1 billion buyout offer, with one engineer alone allegedly receiving a $1.5 billion pay package over six years.

Cohere and Aleph Alpha announce merger in Berlin, creating a $20 billion transatlantic AI company

Cohere and Aleph Alpha are merging in a deal worth around $20 billion, though Cohere's 90% share makes it essentially an acquisition. The move is driven by Canada and Germany's shared desire to build AI capacity outside US control, with the German government signing on as a key customer. The merger represents a broader push by both countries to reduce their reliance on dominant US AI providers, particularly amid growing trade tensions and concerns over data sovereignty.

Google is in talks with Marvell to build custom AI inference chips as it diversifies beyond Broadcom

Google is talking to chip designer Marvell Technology about building two new AI chips focused on inference, that is, running trained models, which is fast becoming more expensive than training them. If a deal goes ahead, Marvell would join Broadcom and MediaTek as a third partner in Google's custom chip programme, giving Google more supplier options and reducing its reliance on any single company.

AI toys for children misread emotions and respond inappropriately, researchers warn

Cambridge researchers tested how young children interact with Gabbo, a cuddly toy powered by an OpenAI chatbot, and found it often struggled to understand them, talked over them, and responded poorly to emotions—dismissing a child’s sadness and giving robotic replies to expressions of affection. They warn that this could confuse children at a critical age when they are still learning how to communicate and handle feelings. The team is calling for new safety rules to protect under-fives, arguing that regulators need to think about psychological safety just as seriously as physical safety.

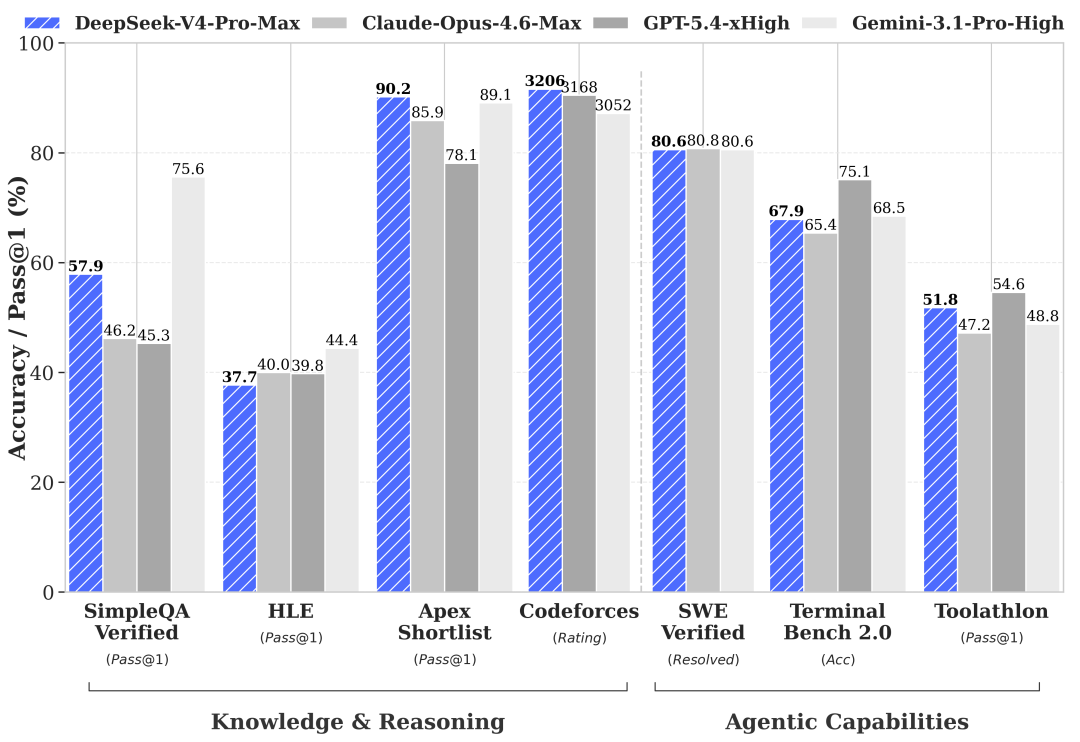

Kimi K2.6: Advancing Open-Source Coding

Moonshot has released Kimi K2.6, a free, open-source AI model built for coding and running complex tasks over long periods. According to Moonshot, it can handle thousands of steps across many hours, and its “agent swarm” feature now coordinates up to 300 mini-agents working in parallel—triple what the previous version could manage. The company says K2.6 achieves state-of-the-art or near-state-of-the-art results across coding and agentic benchmarks, competing closely with GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro while remaining open-source. Independent benchmarks confirm these claims and place Kimi K2.6 as the best open-source model available. The release also introduces "Claw Groups," a research preview allowing multiple agents to collaborate in a shared workspace with K2.6 acting as coordinator. If you are interested in learning more about Kimi’s agent swarms, Caleb Writes Code has a good video explaining how they work and what they can do.

Qwen3.6-Max-Preview: Smarter, Sharper, Still Evolving

Alibaba's Qwen team has previewed Qwen3.6-Max-Preview, a proprietary AI model that improves on its predecessor in coding tasks, general knowledge, and following instructions. According to the team's own benchmarks, it tops several coding leaderboards and outperforms rivals, including Claude Opus 4.5, on a number of tests. The model is still a work in progress, with further improvements expected before a full release.

▶️ What Is Yann LeCun Cooking? JEPA Explained Simply (19:50)

This video explains JEPA, an AI architecture promoted by Yann LeCun that learns by predicting abstract representations of data rather than raw pixels or tokens. It covers how JEPA works, why it struggles with a problem called representation collapse, and how recent research is solving that. The key takeaway is that JEPA is best suited to noisy visual domains like medical imaging rather than text, since language models already handle text efficiently.

🤖 Robotics

π0.7: a Steerable Model with Emergent Capabilities

Physical Intelligence has released π0.7, a single robot-control model that can handle many different tasks without needing to be specially trained for each one. What makes it notable is that it can combine skills it has already learnt to tackle new tasks it has never seen before. For example, it can figure out how to use an unfamiliar kitchen appliance or transfer a laundry-folding skill to a completely different robot it was never trained on. This kind of flexible, mix-and-match generalisation is something robotic AI models have struggled with until now.

Robots beat human records at Beijing half-marathon

A robot made by Chinese smartphone company Honor ran a half-marathon in Beijing in just over 50 minutes, beating the human world record by about seven minutes. That's a huge leap from last year's race, where the fastest robot took just under two hours and 41 minutes. Not everything went smoothly: one robot fell over at the start, and another crashed into a barrier. The race highlights China's growing investment in robotics, with Chinese companies already leading the world in shipping humanoid robots.

▶️ Heard some people like wheels? (1:14)

In this video, Unitree shows what happens when G1, its humanoid robot, gets its feet replaced with wheels, rollerskates, or ice skates. The results are impressive.

AI-powered robot beats elite table tennis players

Sony AI's robot Ace has beaten elite table tennis players in three out of five official matches. Ace uses multiple cameras to track the ball's spin and a fast-moving robotic arm to return shots, with its skills refined through thousands of hours of simulated practice. Players found it tough to beat with tricky spin serves, but discovered that simpler shots exposed its weaknesses. The robot's lack of facial expressions or body language also unsettled opponents. While the result is being called a milestone, experts caution that this kind of specialised skill doesn't directly help solve broader challenges in robotics, like handling everyday objects.

▶️ Why Delivery Still Costs So Much (45:41)

In this conversation on the Automated podcast, Serve Robotics CEO Ali Kashani explains why last-mile delivery is broken and how Serve plans to fix it. His company uses small sidewalk robots that are far safer than cars and were doing real deliveries years before self-driving vehicles. He believes slashing delivery costs with robots will ultimately create more jobs than it displaces, just as the shipping container did for global trade, and could reshape cities towards greater walkability, fewer emissions, and stronger local economies.

A&K Robotics Raises $8 Million to Build Autonomous Mobility Infrastructure for Airports

A&K Robotics has raised $8 million CAD to expand production of Cruz, a self-driving pod that carries passengers through airport terminals. The robot is already in use at airports in Vancouver and Madrid, navigating crowds on its own to help people with mobility needs get to their gates.

🧬 Biotechnology

Colossal Biosciences said it cloned red wolves. Is it for real?

This article takes us to eastern Texas, where scientists are studying coyotes that carry DNA from the nearly extinct red wolf. Colossal Biosciences claimed to have cloned red wolves using these animals’ genes, but many researchers dispute that label since the source animals aren’t officially recognised as red wolves. The piece explores how Colossal’s secrecy and bold claims have fractured an already tense research community. It also raises a deeper question: whether conservation should protect species as traditionally defined or shift toward preserving their role in the ecosystem.

This Sam Altman-Backed $1.8 Billion Startup Bets AI Can Get Drugs Through Clinical Trials Faster

Formation Bio, backed by Andreessen Horowitz, Sequoia, and Sam Altman, has raised $615 million to buy drugs that other companies have given up on and use AI to push them through clinical trials faster. Founder Ben Liu argues the real problem in medicine isn’t finding new drugs—it’s the slow, expensive process of testing them. The company says its AI tools can cut trial times by up to half, and it has already sold one drug to Sanofi for around $630 million.

Pancreatic cancer mRNA vaccine shows lasting results in an early trial

A small Phase 1 clinical trial has found that personalised mRNA vaccines may help some people with pancreatic cancer live significantly longer. Eight of 16 patients responded to the vaccine, and six years on, seven of them are still alive—a striking result for a cancer that fewer than 13% of patients survive beyond five years. The vaccine works by training the immune system to hunt down remaining cancer cells after surgery. The trial is still early and very small, but a larger study is now underway, and a separate team is developing a simpler, one-size-fits-all version of the vaccine.

Scientists Revive Failing Cells With Mitochondria Transplants

Researchers have created a system called MitoCatch that helps deliver healthy mitochondria directly to damaged cells. It works by attaching matching proteins to donor mitochondria and target cells so they lock together, letting the healthy mitochondria slip inside and start producing energy. In mice with inherited blindness, the technique rescued dying retinal cells. It's still early days, with big questions about long-term effectiveness, but the approach could eventually lead to treatments for a broad range of diseases linked to failing mitochondria.

NIH-funded breakthrough shrinks CRISPR for precision delivery in the body

Researchers funded by the NIH have found a smaller version of the CRISPR gene-editing tool that could be delivered directly inside the body—something current, larger versions are too bulky to do. Scientists then engineered an improved version that edits genes far more accurately, jumping from less than 10% to over 80% success in lab tests on human cells linked to diseases like cancer and ALS. If further tests go well, this could open the door to treating many more genetic diseases without needing to remove cells from the body first.

💡Tangents

John Ternus to become Apple CEO

After 15 years as CEO, Tim Cook will move to the role of executive chairman, with John Ternus taking over as chief executive of Apple on 1 September 2026. Ternus has spent 25 years at Apple, leading hardware development for products like iPhone, Mac, and AirPods. Under Cook, Apple became a titan in consumer electronics and grew from a $350 billion company into a $4 trillion one. Cook will remain on the board and continue engaging with policymakers worldwide, while Ternus has signalled he plans to carry forward Apple's existing direction and values.

Tesla to Spend $3 Billion on Chip Fab, Taps Intel for Support

Tesla plans to spend around $3 billion on a research chip factory at its Texas campus to test new manufacturing methods before scaling up. This is the first step in a bigger project called Terafab, which aims to produce enough chips for Tesla, SpaceX, and xAI so they don't have to depend on outside suppliers. Intel is partnering on the effort, with Musk planning to use its latest chipmaking technology. The budget is small compared to what major chipmakers typically spend, reflecting the facility's role as a testing ground rather than a full-scale production site.

Aaron Bastani speaks with Yi-Ling Liu, author of The Wall Dancers, about China's evolving relationship with technology over the past thirty years—from the early internet through mobile payments to AI breakthroughs like DeepSeek. Liu profiles "wall dancers," citizens who creatively navigate the Great Firewall through science fiction, feminism, and queer communities, and traces three generations of increasingly self-confident Chinese tech founders. The conversation also highlights a growing mutual projection of insecurity between China and the West, and how Chinese attitudes towards technology remain more optimistic than the disillusionment now common in the West.

Thanks for reading. If you enjoyed this post, please click the ❤️ button and share it!

Humanity Redefined sheds light on the bleeding edge of technology and how advancements in AI, robotics, and biotech can usher in abundance, expand humanity's horizons, and redefine what it means to be human.

A big thank you to my paid subscribers, to my Patrons: whmr, Florian, dux, Eric, Preppikoma and Andrew, and to everyone who supports my work on Ko-Fi. Thank you for the support!

My DMs are open to all subscribers. Feel free to drop me a message, share feedback, or just say "hi!"