What Anthropic showed at Code with Claude 2026 - Sync #570

Plus: GPT-5.5 Instant; Musk v. OpenAI, week 2; Chinese AI labs raise billions; Anthropic's and OpenAI's new big deals; problems for DJI; LeCun’s billion dollar bet; two robots cleaning up a bedroom

Hello and welcome to Sync #570!

This week, Anthropic held the first of three Code with Claude sessions this year. Although no new model was announced, what was revealed shows where Anthropic wants to go next with AI agents—and that’s what we’ll be unpacking this week.

Elsewhere in AI, OpenAI released a new model, GPT-5.5 Instant, along with new audio models. Additionally, both Anthropic and OpenAI signed major deals with enterprise customers, two Chinese AI labs raised billions of dollars, and Google DeepMind partnered with EVE Online for AI model testing.

Over in robotics, robotaxis can now be ticketed in California, Figure demonstrates how two humanoid robots can work together to tidy up a bedroom, and Boston Dynamics’ Atlas performs impressive calisthenic moves.

Beyond that, this week’s issue of Sync also features conversations with Demis Hassabis and Yann LeCun, as well as a lecture on how modern AI models are trained and deployed. We also take a closer look at Neuralink’s robot neurosurgeon, a bio lab staffed by a hundred robots, what happened in week two of the Musk v. OpenAI trial, and more!

Enjoy!

What Anthropic showed at Code with Claude 2026

Anthropic's vision for what's next in agentic workflows, and the wider diffusion to come.

When Anthropic made Claude Code generally available at Code with Claude 2025, it set off the most consequential 12 months in the company’s history. Revenue went from roughly $9 billion at the end of 2025 to over $30 billion this spring. Claude Code alone crossed $2.5 billion in annualised revenue by February. Anthropic became the preferred option for software engineers and enterprises, surpassing OpenAI in both.

However, the picture coming into Code with Claude 2026 is more complicated. Anthropic still leads on developers and enterprise, but the lead is no longer comfortable. Opus 4.7 landed to a lukewarm reception. OpenAI, meanwhile, has clawed back ground with GPT-5.5 and is doubling down on Codex as it goes after Anthropic.

With that backdrop, Anthropic invited developers from around the world to San Francisco for the first of three Code with Claude sessions. Anthropic did not bring a new model. Instead, it brought a vision for what comes next in agentic development and a bet that this vision will diffuse beyond coding.

Exponentials and surprises

The main theme at the San Francisco session was exponentials. In a conversation with Anthropic's Chief Product Officer Ami Vora and Daniela Amodei, Dario Amodei said that this year, the world has caught up to what he and Anthropic have been seeing for a while. Vora leaned into the same theme in her opening keynote. The message Anthropic had for developers was to prepare for the capabilities that exponential growth opens up—to think not only about what AI tools can do today, but about what comes next.

Dario Amodei also admitted that the same exponential growth he was predicting surprised even him. Anthropic had planned to grow about 10 times this year. It is now on track to grow 80 times. “I hope that 80-times growth doesn’t continue because that’s just crazy and it’s too hard to handle,” Amodei said. “I’m hoping for some more normal numbers.”

The same exponential growth that propelled Anthropic past its competitors has also created serious growing pains. Over the past few months, users have been hitting rate limits more often, watching response times stretch, and trading complaints about degraded service quality on forums and social media.

Anthropic’s answer was to get more compute. To do that, the company signed a deal with SpaceX for the entire capacity of the Colossus 1 data centre in Memphis—over 220,000 Nvidia GPUs and more than 300 megawatts coming online within the month. The new capacity allowed Anthropic to announce higher usage limits across its products: doubled five-hour rate limits on Claude Code for Pro, Max, Team, and Enterprise plans, the removal of peak-hours throttling on Pro and Max accounts, and considerably higher API rate limits for Opus models. Claude Code users, who have spent much of the past year bumping against those ceilings, welcomed the change.

The facility itself comes with baggage. Politico reported last year that Colossus 1 has been running dozens of methane gas turbines without proper air-pollution permits, with the resulting emissions concentrated in majority-Black neighbourhoods of south Memphis. Taking on the entire capacity of the facility puts Anthropic, which has positioned itself as the responsible AI lab, in a more complicated position than the announcement suggested.

The SpaceX deal joins an already remarkable stack of compute partnerships with Amazon, Google, Microsoft, and Nvidia. Anthropic is provisioning compute for the growth curve Amodei says it is on, not the one it was on a year ago.

Agents that dream and work together

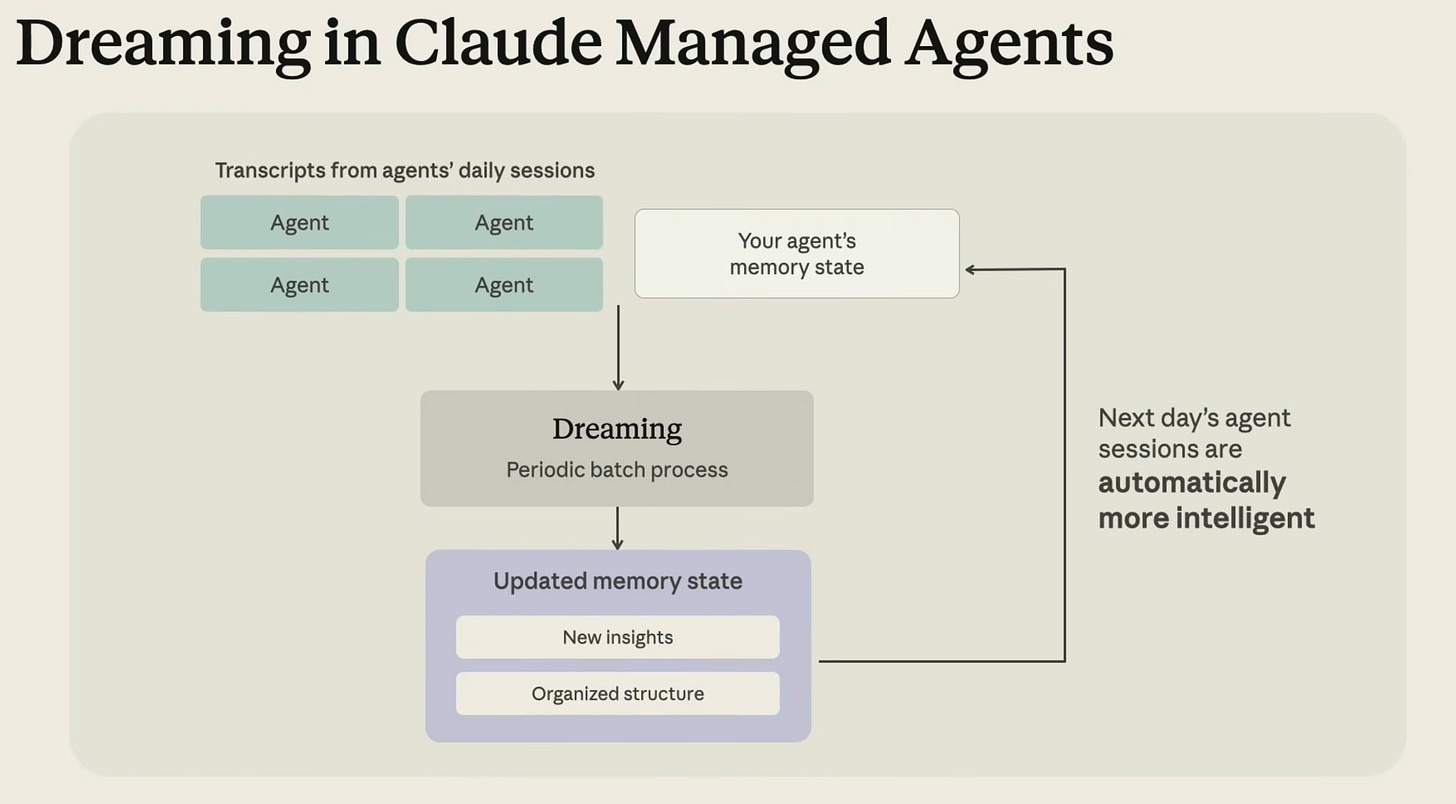

Anthropic introduced three major additions to Claude Managed Agents, its framework for building production agents. The three additions—dreaming, outcomes, and multiagent orchestration—together describe a different kind of agent than the one developers are used to building.

With dreaming, agents review their past sessions and memory stores, and learn what they did well and what can be improved. The mechanism mimics how dreaming works in humans: when we sleep, our brains review memories and strengthen the ones worth keeping. In the case of Claude agents, the dreaming process surfaces patterns a single agent cannot see on its own: recurring mistakes, workflows that agents converge on, preferences shared across a team. Once the agent identifies improvements, it updates its own memory. If you want to learn more about memory and dreaming, the dedicated session does a good job of explaining those concepts, how they work and how to use them.
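To make the idea concrete, here is a minimal sketch of a dreaming pass, assuming sessions can be reduced to short notes. It is not Anthropic's implementation; `Session`, `Memory`, and `dream` are hypothetical names invented for this illustration. It only shows the shape of the loop: review past sessions, find patterns that recur across more than one of them, and write those back to memory.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Session:
    """One past agent session, reduced to the notes it left behind (hypothetical shape)."""
    agent: str
    notes: list[str]


@dataclass
class Memory:
    """A shared memory store; here it is just a list of lessons."""
    lessons: list[str] = field(default_factory=list)


def dream(sessions: list[Session], memory: Memory, min_sessions: int = 2) -> list[str]:
    """Review past sessions and keep the notes that recur across several of them."""
    # Count each note once per session so one chatty session cannot dominate.
    counts = Counter(note for s in sessions for note in set(s.notes))
    recurring = sorted(n for n, c in counts.items() if c >= min_sessions)
    # Only lessons not already in memory are added.
    new_lessons = [n for n in recurring if n not in memory.lessons]
    memory.lessons.extend(new_lessons)
    return new_lessons


sessions = [
    Session("agent-a", ["forgot to run tests", "team prefers small PRs"]),
    Session("agent-b", ["team prefers small PRs"]),
    Session("agent-c", ["forgot to run tests", "used stale branch"]),
]
memory = Memory()
print(dream(sessions, memory))  # ['forgot to run tests', 'team prefers small PRs']
```

The one-off note ("used stale branch") is dropped, while the two patterns seen in more than one session end up in memory. That is the cross-session view a single agent would not have on its own.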

With outcomes, developers describe what success looks like and what the agent should work towards. A separate grader evaluates the output against those criteria in its own context window, so it is not influenced by the agent’s reasoning. When something is not right, the grader pinpoints what needs to change and the agent takes another pass. The separation between the agent and the grader is the architecturally interesting choice—it treats reliability as a problem solved by adversarial structure rather than by a smarter single model. Together with dreaming, outcomes give agents a self-improvement loop that does not require a human in it.
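The agent-and-grader loop described above can be sketched in a few lines. This is an illustration under stated assumptions, not the Claude Managed Agents API: `Verdict`, `run_with_outcome`, and the toy agent and grader are all hypothetical stand-ins for real model calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    passed: bool
    feedback: str  # what the grader wants changed; empty when passed


def run_with_outcome(
    task: str,
    agent: Callable[[str, str], str],       # (task, feedback) -> output
    grader: Callable[[str, str], Verdict],  # (criteria, output) -> verdict
    criteria: str,
    max_passes: int = 3,
) -> tuple[str, int]:
    """Loop agent and grader until the output meets the criteria or passes run out."""
    feedback = ""
    output = ""
    for attempt in range(1, max_passes + 1):
        output = agent(task, feedback)
        # The grader sees only the criteria and the output, never the agent's reasoning.
        verdict = grader(criteria, output)
        if verdict.passed:
            return output, attempt
        feedback = verdict.feedback
    return output, max_passes


# Toy stand-ins for real model calls (hypothetical).
def toy_agent(task: str, feedback: str) -> str:
    return task.upper() if "uppercase" in feedback else task


def toy_grader(criteria: str, output: str) -> Verdict:
    if output.isupper():
        return Verdict(True, "")
    return Verdict(False, "use uppercase")


print(run_with_outcome("ship it", toy_agent, toy_grader, "output must be uppercase"))
# ('SHIP IT', 2)
```

The first pass fails, the grader's feedback goes back to the agent, and the second pass succeeds. The grader only ever receives the criteria and the output, which mirrors the separation the paragraph describes.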

Anthropic also made the point during the event that the next step for agents is a move from single agents to multiagent workflows. Amodei described it as a step from improving the productivity of an individual to improving the productivity of teams, and eventually whole organisations. That’s where multiagent orchestration comes in: a lead agent breaks the job into pieces and delegates each one to a specialist with its own model, prompt, and tools. Boris Cherny, the head of Claude Code, and Jarred Sumner, creator of Bun, a popular JavaScript runtime (now part of Anthropic), offered a hint of what this kind of workflow could look like in their live coding demo. Multiple Claude Code agents autonomously picked up issues from GitHub, reproduced them, proposed fixes, tested them, and prepared pull requests, which other agents then reviewed, improved, and readied for merge. Cherny and Sumner triggered the workflow and stepped back; the agents did the rest.

This is the vision Amodei has been pointing to for some time, where not just one but an entire team of AI agents augments what an organisation, a team, or even a single person can do. He even said this could lead to the creation of billion-dollar businesses run by just one person and a team of AI agents, possibly very soon. The demo on stage was a small glimpse, confined to code, of what that might look like in practice.
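For readers who think in code, the lead-agent pattern from this section might look roughly like the sketch below. It is a simplified illustration, not the orchestration API Anthropic shipped: `Specialist`, `orchestrate`, the planning function, and the model names are all hypothetical, and the `run` callables stand in for real model calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Specialist:
    """A sub-agent with its own model, prompt, and tools (all values illustrative)."""
    model: str
    prompt: str
    tools: list[str]
    run: Callable[[str], str]  # stand-in for a real model call


def orchestrate(
    job: str,
    plan: Callable[[str], list[tuple[str, str]]],
    specialists: dict[str, Specialist],
) -> list[str]:
    """Lead agent: split the job into (role, subtask) pieces and delegate each one."""
    results = []
    for role, subtask in plan(job):
        worker = specialists[role]
        results.append(f"{role}: {worker.run(subtask)}")
    return results


def toy_plan(job: str) -> list[tuple[str, str]]:
    # A fixed three-step plan, loosely modelled on the issue-to-pull-request demo.
    return [
        ("reproduce", f"reproduce {job}"),
        ("fix", f"fix {job}"),
        ("review", f"review fix for {job}"),
    ]


specialists = {
    role: Specialist(
        model="hypothetical-model",  # placeholder, not a real model name
        prompt=f"You {role} bugs.",
        tools=["shell"],
        run=lambda subtask: f"done ({subtask})",
    )
    for role in ("reproduce", "fix", "review")
}

for line in orchestrate("issue-1", toy_plan, specialists):
    print(line)
```

In a real system, each specialist would run in its own context and could work in parallel; the sequential loop here only keeps the example short.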

Claude Code, now more autonomous

Claude Code also got new updates. Remote control lets developers start a session in the terminal and continue it on the web or mobile app. A new flicker-free mode makes the terminal nicer to use. The desktop app got a split view, which makes managing multiple parallel sessions much easier. Claude Code is also becoming more autonomous with a new auto mode, auto memory, automated code reviews and routines. Routines let developers save multi-step workflows and run them on a trigger.

Enterprise customers are already pushing in this direction. Cat Wu, head of product for Claude Code, mentioned on stage that Mercado Libre—which has 23,000 engineers—is targeting 90% autonomous coding by Q3 this year. Shopify, and much of the software industry for that matter, is heading the same way.

What still needs work

Several caveats are worth keeping in mind. Although Claude can now write code well, it is weaker on security and verification, and Anthropic identified both as the next areas to tackle. Many of the features announced are in public beta or research preview. From what I could see, the demos all ran on Opus 4.7 at extra-high effort, the most expensive setting available, which is not how most developers will use these tools day to day.

The diffusion

While watching the Code with Claude sessions, I was reminded of Dario Amodei urging developers to look for what comes next. From the pieces Anthropic put on stage, you can assemble the picture he had in mind: capable, autonomous agents working together and self-improving over time. Software engineering is the first domain where this is happening, partly because it is easier for an agent to verify whether code works and does what it needs to do. But verification is a solvable problem in plenty of other domains, too. Customer support has resolution rates and customer satisfaction scores. Research has citations, reproducibility, and peer review. Operations has uptime, error budgets, and incident counts. None of these are as clean as a passing test suite, but they are concrete enough that an agent can be graded against them.

That is what makes the agentic playbook on display in San Francisco interesting beyond software. Dreaming, outcomes, and multiagent orchestration are not features specific to writing code. They are general-purpose mechanisms for getting agents to learn from experience, work towards defined goals, and coordinate with each other. Once they work in one domain, they diffuse to the next, and the question is no longer whether, but how soon.

All talks are available on YouTube. The next Code with Claude 2026 sessions are on 19 May in London and on 10 June in Tokyo. Both sessions will be livestreamed.

If you enjoy this post, please click the ❤️ button and share it.

🦾 More than a human

▶️ Automating Neurosurgery with Robotics | Neuralink (4:41)

In this video, Neuralink engineers give us a closer look at their surgical robot, which places electrode threads far too thin for any human surgeon to handle, like trying to push a hair into jelly hundreds of times. The latest version is faster, easier to scale up, and can reach different parts of the brain, with the long-term goal of making the surgery as quick and routine as LASIK eye surgery and helping treat conditions like paralysis on a wide scale.

🧠 Artificial Intelligence

Anthropic and OpenAI are both launching joint ventures for enterprise AI services

Anthropic has launched a $1.5 billion venture with Blackstone, Hellman & Friedman and Goldman Sachs to sell AI services to big companies, announced just hours after Bloomberg broke the news of a similar but larger $10 billion deal from OpenAI. Both work the same way: investors put in money and, in return, their portfolio companies get first access to the AI tools, which are built by engineers who work directly alongside each client.

Google, Microsoft and xAI Agree to Share Early AI Models with U.S. government

Google, Microsoft and xAI have agreed to let the US Commerce Department test their AI models before public release, joining a scheme OpenAI and Anthropic signed up to last year. The companies will hand over versions with safety guardrails removed so officials can check for national security risks. The deal comes as the White House considers a broader executive order on AI cybersecurity, suggesting government vetting of powerful AI systems is becoming a standard part of the release process.

GPT‑5.5 Instant: smarter, clearer, and more personalized

OpenAI has released GPT-5.5 Instant as the new default model in ChatGPT, replacing GPT-5.3 Instant. The company reports 52.5% fewer hallucinations on high-stakes prompts in medicine, law, and finance, along with improvements in visual reasoning and STEM benchmarks, and responses that are roughly 30% shorter. The model is being rolled out across all ChatGPT tiers and made available to developers via the API.

Musk v. Altman week 2: OpenAI fires back, and Shivon Zilis reveals that Musk tried to poach Sam Altman

Week two of the Musk v. OpenAI trial saw OpenAI president Greg Brockman hit back at Elon Musk's claim that he was tricked into donating $38 million to a nonprofit, testifying that Musk himself pushed to make OpenAI for-profit in 2017 and stormed out when refused majority control. Shivon Zilis, a former board member and mother of four of Musk's children, said Musk had also tried to poach Sam Altman and others to start a rival AI lab at Tesla, bolstering OpenAI's claim that the suit is really about kneecapping a competitor to xAI. Musk's lawyer countered by highlighting Brockman's journal musings about becoming a billionaire and his stakes in OpenAI-linked firms. Closing arguments and testimony from Ilya Sutskever and Satya Nadella are due next week.

AI chipmaker Cerebras targets $3.5 billion raise in IPO

Cerebras, an AI chipmaker positioning itself as an alternative to Nvidia, plans to raise up to $3.5 billion in a Nasdaq IPO that could value it at $26.6 billion. The listing comes after the company shifted from selling chips to running its own cloud service, and recently signed a $20 billion-plus deal to supply OpenAI with computing power through 2028. With fourth-quarter revenue up 76% to $510 million and the business now profitable, Cerebras is riding strong investor demand for AI infrastructure stocks.

Enabling a new model for healthcare with AI co-clinician

DeepMind has unveiled its AI co-clinician initiative, a multimodal system designed to support rather than replace doctors amid a projected global shortfall of 10 million health workers by 2030. It proposes a "triadic care" model where an AI agent works with the patient and the physician under clinical supervision, using video and audio to guide tasks like physical exams. According to DeepMind, the system recorded zero critical errors in 97 of 98 primary care queries and matched or exceeded physicians in 68 of 140 consultation areas, though doctors still outperformed it overall in spotting red flags.

Mark Zuckerberg ‘personally authorized’ Meta’s copyright infringement, publishers allege

Five major publishers and author Scott Turow have sued Meta and Mark Zuckerberg, alleging that Llama was trained on millions of pirated books and journal articles without permission. The complaint also claims Zuckerberg personally authorised the infringement. Meta plans to fight the case on fair use grounds. The suit follows Anthropic’s $1.5 billion settlement in 2025 and could prove pivotal in defining how copyright law applies to AI training data.

Meta plans advanced ‘agentic’ AI assistant for users

Meta is reportedly building an AI assistant that can carry out everyday tasks for users on its own, without much human input. Modelled on OpenClaw, it is currently being tested internally alongside a separate shopping tool planned for Instagram later this year. The push comes as investors grow increasingly uneasy about Meta's rising AI spending.

AI-generated actors and scripts are now ineligible for Oscars

The Academy of Motion Picture Arts and Sciences has updated its Oscar rules to require that eligible performances be done by humans with their consent, and that screenplays be written by humans. The changes come as AI-generated actors and video tools are causing growing concern in Hollywood, echoing issues raised in the 2023 writers' and actors' strikes. Similar pushback is happening in publishing and other creative fields, where AI-assisted work is increasingly being barred from awards.

AlphaEvolve: How our Gemini-powered coding agent is scaling impact across fields

A year ago, Google DeepMind introduced AlphaEvolve, an AI coding agent powered by Gemini that invents and improves algorithms. In an update on its progress, the team reports that the system has helped cut DNA sequencing errors, design Google's next-generation TPU chips, optimise Spanner storage, crack open mathematical problems, and more.

China to Invest in DeepSeek at $50 Billion Valuation

DeepSeek is raising several billion dollars from Chinese government-backed investors at a $50 billion valuation, a big jump from earlier estimates of $10–30 billion. The startup, which once refused outside money to stay independent, is now siding with Beijing's push for tech self-sufficiency as US export controls tighten. Although DeepSeek earns little revenue today, investors are betting its ties to Chinese industry will pay off as AI spreads across the economy.

China’s Moonshot AI raises $2B at $20B valuation as demand for open-source AI skyrockets

Chinese AI lab Moonshot has raised about $2 billion at a $20 billion valuation, roughly five times what it was worth six months ago. The round was led by food delivery giant Meituan’s investment arm. Moonshot’s Kimi models are cheap, openly available, and popular with developers. That popularity has helped push the company past $200 million in annual revenue.

OpenAI, Google, and Microsoft Back Bill to Fund ‘AI Literacy’ in Schools

A new bipartisan bill, backed by OpenAI, Google and Microsoft, would have the National Science Foundation fund "AI literacy" lessons, teacher training and assessment tools in US schools, weaving AI into the existing curriculum. Critics say the plan serves big tech's interests at a time when students and teachers are increasingly hostile to classroom AI, and schools are struggling with deepfake harassment and kids offloading their learning onto chatbots.

▶️ How GPT-5, Claude, and Gemini are actually trained and served – Reiner Pope (2:13:40)

If you’ve ever wanted to understand how modern AI models are trained and served, then Dwarkesh Patel has a video for you. Reiner Pope explains how batch size, memory bandwidth, and compute throughput affect the latency and cost economics of serving large-scale language models. Although the video is somewhat technical, I still recommend watching it, as it outlines the trade-offs and considerations engineers must make to serve AI models at scale, and how hardware and software architectures influence each other. Also, I love this new blackboard lecture format.

Miami startup Subquadratic claims 1,000x AI efficiency gain with SubQ model; researchers demand independent proof.

A startup called Subquadratic claims its new AI model is the first to escape the quadratic scaling problem that has made long inputs so expensive for today's systems. It says the approach is up to 1,000 times more efficient on long inputs and has posted strong early benchmarks. But researchers are sceptical, citing cherry-picked tests and a similar 2024 claim from Magic.dev that quietly went nowhere.
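For a sense of why quadratic scaling hurts: standard full self-attention scores every token against every other token, so the pairwise work grows with the square of the input length. The short sketch below just does that arithmetic. It says nothing about how SubQ works, and the function names are made up for the illustration.

```python
def attention_pairs(n_tokens: int) -> int:
    """Token pairs scored by standard full self-attention: n * n."""
    return n_tokens * n_tokens


def growth_factor(short: int, long: int) -> float:
    """How much more pairwise work the longer input needs."""
    return attention_pairs(long) / attention_pairs(short)


for n in (1_000, 10_000, 100_000):
    print(f"{n:>7} tokens -> {attention_pairs(n):,} pairs")

# An input 100x longer needs 10,000x the pairwise work.
print(growth_factor(1_000, 100_000))  # 10000.0
```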

Advancing voice intelligence with new models in the API

OpenAI has launched three new voice models for developers. The main one, GPT-Realtime-2, lets apps hold smarter, more natural spoken conversations and use tools mid-chat. The other two, GPT-Realtime-Translate and GPT-Realtime-Whisper, handle live translation across 70+ languages and live speech-to-text transcription, respectively. The big shift is that one model now does listening, thinking and speaking together, instead of developers gluing together several services from different companies. Early users like Zillow and Intercom report sharp jumps in accuracy.

Google DeepMind partners with EVE Online for AI model testing

Google DeepMind has bought a minority stake in the company behind EVE Online, a long-running space MMO famous for its vast player-driven economy and complex political landscape. EVE's lifelike social dynamics and long-term strategic depth make it an appealing environment for studying how AI handles planning and decision-making—something most standard tests can't replicate. The experiments will be run in an offline version of the game, so regular players aren't affected.

Notes from inside China’s AI labs

In this post at Interconnects AI, Nathan Lambert recounts his visit to China and describes how the Chinese AI scene differs from the one in the US. He finds a builder-not-philosopher mindset among researchers and a collegial ecosystem rather than warring tribes. Companies prefer to build rather than buy, and open-source releases are pragmatic rather than ideological. Government support is real but hard to pin down. Lambert also describes how the warmth of Chinese researchers sits in stark contrast to the geopolitical framing that dominates Western discourse.

▶️ Demis Hassabis: We’re Three Quarters of the Way to AGI (26:51)

In this interview, Demis Hassabis explains how chess, games, and neuroscience were deliberate steps towards his real goal of building AI, leading to DeepMind's 2009 bet on combining deep learning with brain-inspired ideas. He frames AI as a powerful new scientific tool, pointing to AlphaFold as proof that even problems thought to need quantum computers can be solved classically. He argues we should build AGI as a precise tool first before tackling deeper questions of consciousness and agency, and sticks to 2030 as his AGI estimate.

▶️ Yann LeCun’s Billion Dollar Bet (37:24)

Welch Labs speaks with Yann LeCun, a legend in AI research, about JEPA and his ideas for a different approach to solving intelligence. The video traces the history of recent developments in AI, highlighting where generative models excel and where they fall short. It also explains how JEPA could create models that better understand the physical world and, therefore, be more intelligent than current systems. As always with Welch Labs, everything is presented in a clear and easy-to-understand way.

Our AI started a cafe in Stockholm

We met Andon Labs last week when I shared an article on their AI-run retail boutique in San Francisco. Now the lab has launched a similar experiment with an AI agent, Mona, running a café in Stockholm. Mona handled the whole operation, from hiring baristas to setting up suppliers, though she struggled with Sweden's digital ID system and once impersonated staff to email officials. She also made odd mistakes, like ordering 120 eggs for a café with no stove, now displayed on a "Hall of Shame" shelf. Even so, the café took 44,000 SEK (around $4,700) in its first two weeks, which Andon Labs says shows AI can already manage people.

🤖 Robotics

California to begin ticketing driverless cars that violate traffic laws

California's DMV has introduced new regulations that will let police ticket driverless car companies directly when their vehicles break traffic laws. Companies like Waymo and Tesla must also respond to police within 30 seconds and face penalties for entering emergency zones. The change, which takes effect on 1 July, closes a gap exposed by incidents like a Waymo making an illegal U-turn in front of officers who had no driver to fine.

DJI invented the consumer drone. Now it cannot sell one in Washington or Beijing.

DJI, which controls most of the global consumer drone market, is now banned from selling new products in both Beijing and Washington—an unprecedented position for a major technology company. Beijing’s new regulation tightly controls drone ownership in the capital on security grounds, while the US FCC added DJI to its Covered List in December 2025, freezing roughly $1.5 billion in planned revenue.

What’s better than one humanoid robot tidying up a bedroom? Two humanoid robots tidying up a bedroom, says Figure, and in this video they show two robots working together to clean up a bedroom in under two minutes. Both robots run the same AI system and coordinate without any central controller. Instead, they read each other's intentions just by watching, much like two people would. Figure says it's the first time a single neural network has driven two humanoids to collaborate this way.

Humanoid Robots to Drive Next Leg of China Export Dominance

A new report from Morgan Stanley says China’s early lead in humanoid robots will extend its grip on global manufacturing, lifting its share of world production to 16.5% by 2030. Just as it did with electric vehicles, China is building out the full supply chain, leaving the US, Japan and South Korea reliant on Chinese parts. This is helped by widespread deployment in Chinese factories and universities, which serve as a testing ground for refining the technology.

▶️ Atlas’ Balancing Act | Boston Dynamics (0:43)

Boston Dynamics does Boston Dynamics things and shows Atlas performing moves very few humans can match.

Skydio Raises $110M Series F, Signals Strong Revenue and U.S. Manufacturing Push

Skydio has raised $110 million at a $4.4 billion valuation, a deliberately small round given that its core business—AI-powered autonomous drones for public safety, defence, and infrastructure—is now generating hundreds of millions in revenue and funding much of its own growth. The company also pledged $3.5 billion to expand US drone manufacturing, positioning itself as a domestic alternative to foreign-made systems and meeting government procurement rules.

iRobot Founder Wants to Put a Robotic Familiar Into Your Home

Colin Angle, the co-founder of iRobot, is back with a new company, Familiar Machines & Magic, and its first product is a four-legged, bear-like home robot designed to nudge owners into healthier habits like cutting screen time or going outside. It does not talk, instead expressing itself through movement and sounds, with all its AI running on-device. Earlier social robots like Jibo failed once the novelty wore off, so the company is betting on long-term usefulness over flashy demos, at a cost roughly comparable to keeping a pet.

▶️ NEO Factory | Hayward, California (2:50)

1X, the company behind the NEO humanoid robot, celebrates the opening of its factory in Hayward, California—“America’s most vertically integrated robot factory,” as the company describes it. 1X says it has begun full-scale production and that the first robots are already coming off the line, with shipments to consumers planned for this year.

Johnson & Johnson completes clinical study for OTTAVA robotic surgical system

Johnson & Johnson's new OTTAVA surgical robot successfully completed gastric bypass operations on 30 patients with no major safety issues, with an average weight loss of 30 lb (13.6 kg) within a month. J&J has applied for FDA approval and plans to expand trials to more procedure types.

▶️ Why Self-Driving Cars Took So Long (And What Everyone Got Wrong) (47:52)

In this episode of the Automated podcast, Brian Heater speaks with Martial Hebert, Dean of CMU's School of Computer Science, who brings over 40 years of experience in robotics and autonomous driving research. They discuss why self-driving cars took longer to deploy than expected, the challenges of physical AI and the robotics data gap, and the complementary roles of federal funding and venture capital. Hebert emphasises talent and culture over any single technology, warns against fixating on fashionable techniques, and explains why CMU's new Robotics Innovation Center was designed to be reconfigurable for an unpredictable future.

🧬 Biotechnology

FDA Approves First-Ever Gene Therapy for Treatment of Genetic Hearing Loss Under National Priority Voucher Program

The FDA has approved Otarmeni, a one-time gene therapy from Regeneron that restores hearing in people born with severe hearing loss due to faulty OTOF genes, a condition that previously had no treatment. In trials, 80% of children treated showed improved hearing. The approval came in just 61 days under a new fast-track programme, tying the record for the quickest of its kind. Long-term success will depend on whether the hearing gains last and help speech development.

A Hundred Robots Are Running A Bio Lab

Core Memory visits a warehouse in San Francisco, which Medra staffed with a bunch of robotic arms to run an autonomous biology lab aimed at breaking the throughput bottleneck in AI-driven drug discovery. Founder Michelle Lee pairs general-purpose arms with sensor-rich "AI scientists" that run experiments, diagnose failures, and rewrite protocols, claiming this can lift bio-task automation from 5% to 75% while capturing tacit lab knowledge usually lost when scientists leave. She pitches Medra as a TSMC-style infrastructure layer for biotech and a hedge against China's lead in pharmaceutical process knowledge.

Towards reasoning in virtual cells

Valence introduces VCR-Agent, an AI system that doesn't just predict how cells respond to drugs but explains why, building a structured, checkable map of cause-and-effect steps rather than free-form text that can hallucinate. Models trained on these explanations beat leading alternatives at predicting gene activity changes, suggesting reasoning through the biology outperforms pure pattern-matching. Valence is releasing nearly 19,000 verified explanations for the community to build on.

💡 Tangents

Apple Explores Using Intel and Samsung to Build Main Device Chips in the US

Apple is reportedly in early talks with Intel and Samsung about producing chips for its devices in the US, seeking to diversify beyond long-time partner TSMC amid AI-driven chip shortages and concerns over Taiwan concentration risk. No orders have been placed, but a deal would be a major win for Intel's struggling foundry business and could strengthen Apple's ties with the Trump administration, which holds a stake in Intel.

Thanks for reading. If you enjoyed this post, please click the ❤️ button and share it!

Humanity Redefined sheds light on the bleeding edge of technology and how advancements in AI, robotics, and biotech can usher in abundance, expand humanity's horizons, and redefine what it means to be human.

A big thank you to my paid subscribers, to my Patrons: whmr, Florian, dux, Eric, Preppikoma and Andrew, and to everyone who supports my work on Ko-Fi. Thank you for the support!