The Legacy of Move 37

Ten years after AlphaGo defeated Lee Sedol, the Go community has lessons for everyone living with superhuman AI

March 2016. Seoul, South Korea. Millions around the world tune in to watch a game of Go. On one side of the board sits Lee Sedol, one of the greatest players the game has ever produced, a genius renowned for his creative, fighting style. His opponent has already beaten the European champion 5-0 and is here to challenge the very best. But his opponent is not human, and it is here to make history.

And it did. Ten years ago, DeepMind's AlphaGo defeated Lee Sedol 4–1, with consequences that would reach from Seoul to Silicon Valley to Beijing. Go, an ancient game famous for its beauty and complexity, was mastered not by a human but by a machine. AlphaGo's win stands alongside Deep Blue’s victory over Garry Kasparov in 1997 and the release of ChatGPT in late 2022 as a defining milestone in AI. It proved that artificial intelligence had arrived, sparked a global AI race, transformed how humans play Go, and in the process offered the first hints of how we can coexist with superhuman AI.

How DeepMind solved Go

Go is an incredibly complex game. There are roughly 10^170 (one followed by 170 zeroes) possible board configurations. To put that number in perspective, the number of atoms in the observable Universe is estimated to be 10^80, or one followed by eighty zeroes. If you wanted to assign one atom to each board configuration, you would need vastly more atoms than the observable Universe contains. And then, in this enormous space of all possible solutions, you have to find the sequence of boards that leads to victory.
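To make the comparison concrete, here is a rough back-of-the-envelope check in Python. It uses a simple upper bound that is not from the text above: each of the 361 points on the board is empty, black, or white, giving 3^361 arrangements. That overcounts, because it includes illegal positions, but it lands in the same neighbourhood as the figure quoted above.

```python
# Each of the 19 x 19 = 361 points is empty, black, or white.
# This counts illegal positions too, so it is only an upper bound.
POINTS = 19 * 19
upper_bound = 3 ** POINTS

positions = 10 ** 170   # order of magnitude quoted in the text
atoms = 10 ** 80        # estimated atoms in the observable Universe


def order_of_magnitude(n: int) -> int:
    """Number of decimal digits minus one, i.e. floor(log10(n)) for n >= 1."""
    return len(str(n)) - 1


print(order_of_magnitude(upper_bound))          # exponent of the crude upper bound
print(order_of_magnitude(positions // atoms))   # board configurations per atom
```

Even after handing one board configuration to every atom in the observable Universe, there would still be around 10^90 configurations left over per atom.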

Earlier Go programs relied heavily on handcrafted knowledge and, later, Monte Carlo tree search, but even the best of them played at an amateur level. Most experts expected superhuman Go play to be at least a decade away. DeepMind proved them wrong.

DeepMind’s answer was to combine three techniques into a new architecture. First, deep neural networks treated the 19×19 Go board as an image. Two networks worked in tandem: a policy network that predicted the probability of each possible move, and a value network that estimated who was winning from any given position. The policy network was initially trained on 30 million positions from human games, learning to predict what an expert would play. It was then refined through reinforcement learning. AlphaGo played millions of games against previous versions of itself, gradually discovering strategies that went beyond what it had learned from humans.
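As a minimal sketch of what the two networks hand to the rest of the system, the snippet below turns raw scores into a probability for each legal move and squashes a position score into a win estimate. The function names and the tiny three-move example are hypothetical; the real networks are deep convolutional models, and this only illustrates the shape of their outputs.

```python
import math


def move_probabilities(logits: list, legal: list) -> list:
    """Softmax over legal moves only; illegal moves get probability 0."""
    # Subtract the largest legal score first for numerical stability.
    top = max(x for x, ok in zip(logits, legal) if ok)
    weights = [math.exp(x - top) if ok else 0.0 for x, ok in zip(logits, legal)]
    total = sum(weights)
    return [w / total for w in weights]


def win_estimate(score: float) -> float:
    """Squash a raw score into [-1, 1]: -1 is a sure loss, +1 a sure win."""
    return math.tanh(score)


# Hypothetical raw scores for three candidate moves; the second is illegal.
probs = move_probabilities([2.0, 1.0, 0.0], [True, False, True])
print(probs)
print(win_estimate(0.0))
```

The policy output answers "which moves are worth looking at?", while the value output answers "who is ahead here?". The search described next relies on both.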

Second, Monte Carlo tree search used these neural networks to focus computational effort on the most promising moves rather than trying to explore every possibility. Think of it as the difference between reading every book in a library and having a knowledgeable guide point you to the right shelf. The neural networks acted as that guide, telling the search where to look.
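The "knowledgeable guide" idea can be sketched in a few lines. The selection rule below is in the spirit of the one used in AlphaGo-style search: each candidate move gets its average result so far plus an exploration bonus that is proportional to the policy network's prior and shrinks as the move is visited. The `Edge` class, the `c_puct` default, and the `+ 1` under the square root are illustrative assumptions, not DeepMind's code.

```python
import math
from dataclasses import dataclass


@dataclass
class Edge:
    """Search statistics for one candidate move from a position."""
    prior: float               # policy network's probability for this move
    visits: int = 0            # how many simulations have tried it so far
    total_value: float = 0.0   # sum of evaluations backed up through it

    @property
    def mean_value(self) -> float:
        return self.total_value / self.visits if self.visits else 0.0


def select_move(edges: dict, c_puct: float = 1.0) -> str:
    """Pick the move with the best mean value plus prior-weighted bonus."""
    total_visits = sum(edge.visits for edge in edges.values())

    def score(move: str) -> float:
        edge = edges[move]
        # The +1 under the square root is a small tweak so that priors
        # still break ties before any move has been visited.
        bonus = c_puct * edge.prior * math.sqrt(total_visits + 1) / (1 + edge.visits)
        return edge.mean_value + bonus

    return max(edges, key=score)


edges = {"A": Edge(prior=0.6), "B": Edge(prior=0.3), "C": Edge(prior=0.1)}
first_choice = select_move(edges)    # no visits yet, so the highest prior wins

# Pretend "A" was searched ten times and turned out to be only mildly good.
edges["A"].visits = 10
edges["A"].total_value = 1.0
second_choice = select_move(edges)   # the bonus for "A" has shrunk, so "B" gets a turn
```

The search starts where the network's intuition points, but a move that keeps disappointing gradually loses its claim on the search budget.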

Third, a fast rollout policy (a lightweight, stripped-down move predictor) could choose a move in just microseconds, enabling rapid simulated playouts that complemented the deeper but slower neural network analysis.
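Here is a rough sketch of how a quick playout and a slower network estimate can be blended into one judgement about a position. To my understanding, the published AlphaGo design mixed the two with equal weight, which is what the default `lam = 0.5` mirrors. Everything else is a stand-in: the toy game (take one to three stones, whoever takes the last stone wins) and the uniformly random playout replace Go and the real rollout policy.

```python
import random


def random_rollout(stones: int, to_move: int, rng: random.Random) -> int:
    """Play random moves to the end of a toy take-1-to-3 game.

    Whoever takes the last stone wins. Returns +1 if player 0 wins,
    -1 if player 1 wins. Assumes stones >= 1.
    """
    while True:
        stones -= rng.randint(1, min(3, stones))
        if stones == 0:
            return 1 if to_move == 0 else -1
        to_move = 1 - to_move


def leaf_value(network_estimate: float, rollout_outcome: int, lam: float = 0.5) -> float:
    """Blend the slow network estimate with the fast playout result."""
    return (1 - lam) * network_estimate + lam * rollout_outcome


rng = random.Random(0)
outcome = random_rollout(stones=10, to_move=0, rng=rng)
print(leaf_value(network_estimate=0.2, rollout_outcome=outcome))
```

A single playout is noisy, but it is cheap enough to run in bulk, and averaging many of them alongside the network's estimate gives the search two partly independent opinions on each position.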

The result was a system that learned to evaluate positions and select moves through experience. Where Deep Blue searched over 200 million positions per second, AlphaGo evaluated far fewer positions but searched smarter, guided by something that could be described as an artificial intuition.

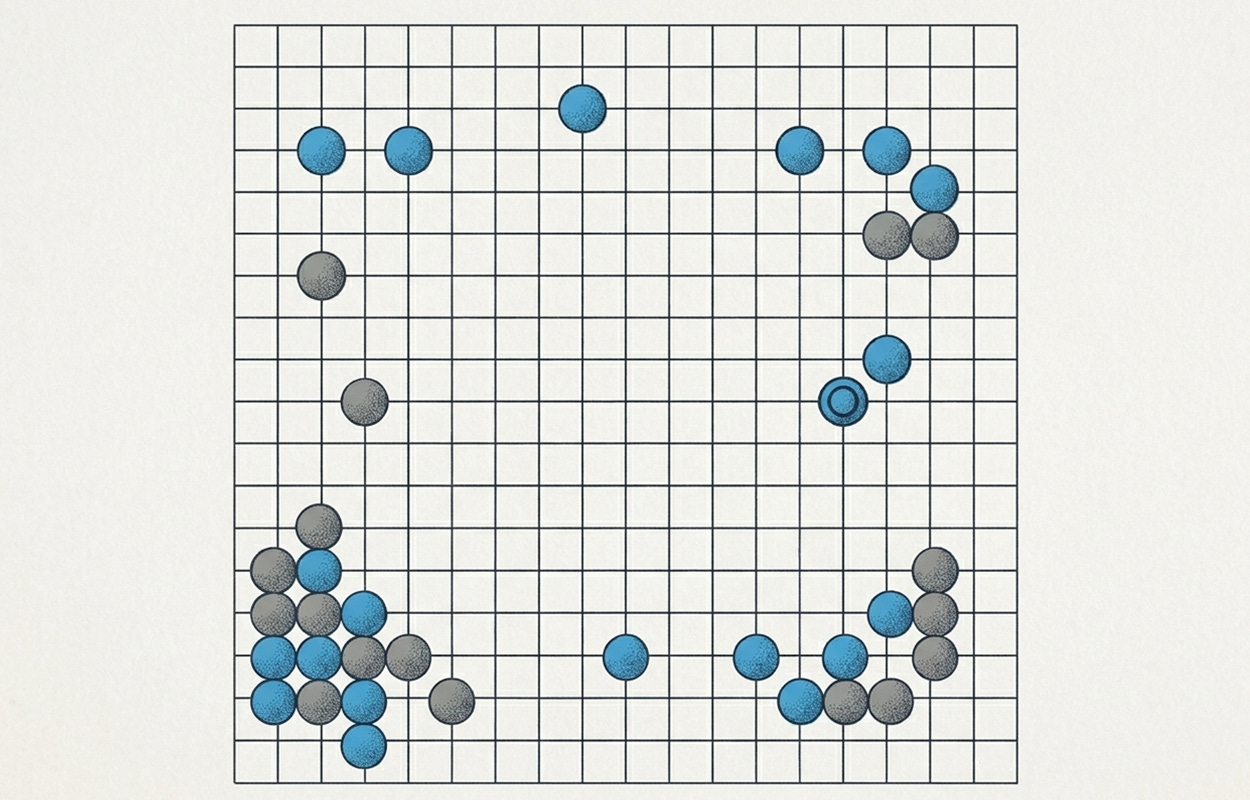

That artificial intuition revealed itself most strikingly in Game 2. With the now-legendary Move 37, AlphaGo played a move that professional commentators initially dismissed as a mistake. It was a move that a human had roughly a 1-in-10,000 chance of playing. It proved devastatingly effective. Fan Hui, the European champion who had been advising the DeepMind team, watched in awe: “It’s not a human move. I’ve never seen a human play this move. So beautiful.”

Lee Sedol answered with an equally impressive move in Game 4. Down three games and with the match already lost, he played Move 78 (dubbed “God’s Touch” in Korean), a wedge so brilliant and unexpected that it was itself a 1-in-10,000 play. AlphaGo’s evaluation collapsed, leading to a cascade of errors and handing Lee Sedol the only victory any human would ever claim against AlphaGo. If Move 37 showed what a machine could see that humans could not, Move 78 showed that human creativity, pushed to its absolute limit, could still find what a machine had missed.

After the Lee Sedol match, an updated version of AlphaGo, known as AlphaGo Master, appeared on Korean and Chinese Go servers. There, it won 60 consecutive games against top professionals, including three against world number one Ke Jie. At the Future of Go Summit in Wuzhen, China, in May 2017, AlphaGo Master defeated Ke Jie 3-0 and beat a team of five top Chinese professionals playing together. After the summit, AlphaGo was retired from competitive play. In its short run, AlphaGo went 74–1 in its public games. Lee Sedol was the only human to beat it. In recognition of DeepMind’s achievement, the Korea Baduk Association, South Korea's governing body for professional Go, awarded AlphaGo an honorary 9-dan, the highest professional certification in Go.

Although AlphaGo was retired, DeepMind continued developing even more impressive models. In October 2017, the London-based AI lab unveiled AlphaGo Zero, which learned Go entirely from self-play with zero human data. After three days of training, it beat the version that had defeated Lee Sedol by 100 games to 0. After 40 days of self-play, it surpassed AlphaGo Master. Two months later, DeepMind followed up with AlphaZero, an even more powerful model that mastered not only Go but also chess and shogi, given only the rules of each game. AlphaZero achieved superhuman performance in chess within hours and in Go within days.

AI’s Sputnik Moment

AlphaGo’s victory sent shockwaves across the world.

Just two days after the Lee Sedol match, South Korean President Park Geun-hye announced ₩1 trillion (approximately $863 million) in AI research funding over five years. South Korea followed with an additional $2 billion commitment by 2022 and launched a sovereign AI initiative in 2024. In 2017, Canada became the first country in the world to launch a national AI strategy, committing C$125 million to research and talent. Japan launched its AI Technology Strategy Council the same year. In 2018, France pledged €1.5 billion for AI development under President Macron. By the end of 2018, over a dozen countries had published national AI strategies, a rarely seen wave of near-simultaneous government mobilisation around a single technology.

But it was in China that AlphaGo made the biggest impact. Go, known in China as weiqi, is an ancient Chinese invention and one of the four classical arts of the Chinese scholar. A Western-developed AI defeating the world’s best at China’s own game carried deep cultural resonance. Kai-Fu Lee, former president of Google China, described the event as “China’s Sputnik moment” in his 2018 book AI Superpowers. What followed was a focused plan to transform China into an AI superpower. Less than two months after Ke Jie’s defeat in Wuzhen, China’s State Council issued the “New Generation Artificial Intelligence Development Plan.” The plan set a three-phase strategy to achieve global AI leadership by 2030. The money moved fast. According to the South China Morning Post, Chinese equity funding for AI startups jumped from 11% of global investment in 2016 to 48% in 2017, surpassing the United States by 10 percentage points. Over the next few years, a new generation of AI researchers, founders and startups emerged across the country.

Looking back, we can see that the Chinese plan is bearing fruit. In January 2025, a then little-known Chinese startup called DeepSeek released its R1 reasoning model: open-source, built for a fraction of what the US companies spent on their models while matching their performance and being more efficient to run. It briefly overtook ChatGPT as the top free app on Apple's US App Store and wiped roughly $1 trillion from US AI-exposed tech stocks in a single day. DeepSeek was not an accident. It emerged from years of investment and from the AI ecosystem that China had been building since the “AlphaGo shock”, an ecosystem that now produces world-class researchers, models and products despite being constrained by US chip export controls.

How AlphaGo changed Go

In Go, there is a concept known as jōseki—standardised sequences of moves, similar to opening theory in chess, developed over more than 2,000 years and codifying what generations of masters considered optimal play. For centuries, this understanding was passed down unquestioned from master to disciple. AlphaGo demonstrated that many of these human fundamentals were, in fact, suboptimal or merely prejudiced assumptions.

A 2023 study published in the Proceedings of the National Academy of Sciences (PNAS) analysed over 5.8 million Go moves from professional play between 1950 and 2021. The researchers found that human decision quality had stayed largely the same for 66 years. After AlphaGo’s victories, both decision quality and novelty scores shot up dramatically. Crucially, memorisation alone could not explain the improvements. The gains began approximately 18 months after AlphaGo’s victory, roughly coinciding with the release of Leela Zero. This open-source Go engine allowed players to build interactive analysis tools showing the AI’s reasoning behind move choices. This enabled deep, interactive learning rather than mere copying. Players, with the help of AI tools, were discovering novel moves that contributed more to winning than non-novel ones.

AI has fundamentally reshaped how professional Go is played, trained, and experienced. Players now train to replicate AI’s moves as closely as they can, even when the machine’s thinking remains mysterious to them. It is essentially impossible to compete professionally without using AI. South Korean Go commentator Park Jeong-sang summarised the shift bluntly: “AI has changed everything. Fundamental moves that were once considered common sense aren’t played at all today, and techniques that didn’t exist before have become popular.” The overall level of mastery went up, but the game lost personality. Before AlphaGo, players’ styles were diverse and distinctive. Go fans could identify who played a particular game just by looking at the game records. Lee Sedol played like a jazz musician—throwing the game into disorder and thriving in the turbulence. Ke Jie played like a dancer, finding elegant lines through positions others found too tangled. That distinctiveness has faded. The opening phase of the game, once a canvas for abstract thinking and creativity, is now largely standardised. Over a third of moves by top players replicate AI’s recommendations, and the first 50 moves of each game are often identical to what AI suggests.

The older generation of Go masters suddenly woke up playing a different game from the one they fell in love with. Lee Sedol retired three years after his match with AlphaGo. The game he had spent his life mastering had become an exercise in following a machine’s recommendations, and he could no longer find joy in it. Ke Jie has been vocal about the downsides, too, telling a Chinese news outlet in 2021: “I feel the exact same way as the fans watching. It’s very tiring and painful to watch.”

But while the old guard mourned what was lost, a new generation saw only what was gained. Shin Jin-seo, currently the world’s top-ranked Go player, represents the AI-native generation. Every morning, he sits at his computer and opens KataGo, an open-source Go engine that is faster and sharper than the original AlphaGo. He has earned the nickname “Shintelligence” for how closely his moves mirror AI’s. According to a 2022 study by the Korean Baduk League, his moves match AI’s recommendations 37.5% of the time, well above the 28.5% average among professional players. He has trained with AI programs more advanced than AlphaGo ever was, and is optimistic that he could now defeat the original AlphaGo by targeting its known weaknesses.

If AI standardised how the game is played, it also democratised who gets to play it at the highest level. For decades, the best training meant studying under top male players in circles that were difficult for women to break into. AI levelled the playing field. In 2022, Choi Jeong—nicknamed “Girl Wrestler” for her fierce, combative style—became the first woman to reach the finals of a major international Go tournament. Two years later, Kim Chae-young won the Korean Go League’s postseason playoffs as the only female player in the tournament. Kim described what it was like to relearn the game through AI: “It seems like it’s thinking in a higher dimension. It’s less about rationally thinking through each move, but more about developing a gut feeling—an intuition.” But AI didn’t just change how she played. It changed how she saw her competitors. “Before, I couldn’t gauge just how strong top male players were—they felt invincible,” she told the MIT Technology Review. “Now, I know that they make mistakes, and their moves aren’t always brilliant. AI broke the psychological barrier.”

Learning to coexist with a superhuman AI

The Go community’s experience is worth studying carefully, because they are ten years ahead of the rest of us. They have lived with a superhuman AI since March 2016. They have wrestled with the questions that every profession is now beginning to ask: How do you stay motivated when a machine is better than you? How do you train the next generation? How do you preserve what makes your craft meaningful?

The older generation of Go masters learned their craft in one world and woke up in another. Some adapted. Many could not. Lee Sedol retired. This was not a failure of character; it was the reasonable response of someone who had spent a lifetime mastering a game that no longer existed in the form he loved. The same is coming for every other profession. A new, AI-native generation is already entering the stage: people who use AI tools as naturally as previous generations used the internet. The question is not whether this transition will happen. It is already happening. The question is how to navigate it.

I am a software engineer. I have been writing code since I was 14 years old. I love finding elegant solutions expressed in code. But when GPT-4 came out, I saw the writing on the wall. It was a matter of time before AI would write code better than I. That time has come. But just as Go players use AI to deepen their understanding of the game, I use AI to become a better programmer. I read the code it generates. I ask for different approaches. I ask why it made the choices it did. Sometimes I find solutions I would never have considered—a data structure I’d never have reached for or an architectural pattern that solves the problem in a cleaner way. Other times, I see that my instinct was better. The point is not to compete with the machine. The point is to use it as a lens that reveals what I do not yet understand about my own craft.

The same approach can be applied to other areas of human endeavour, from mathematics and biology to art and engineering. However, I won’t pretend this story has a clean ending. Some people will be left behind—not because they lack talent, but because the craft they mastered is no longer the craft the world needs. Some art will be optimised away. The Go community has already lived through both. But they have also shown that it is possible to use a superhuman tool to grow rather than shrink—to discover moves you would never have found alone, to see your own blind spots, to push your understanding past what you thought was your ceiling. That is the path I am choosing. Not because it guarantees anything, but because refusing to engage with the most powerful learning tool ever built guarantees something worse.

AlphaGo’s legacy

Ten years on, AlphaGo's fingerprints are everywhere. It sent a signal across the world that AI should be taken seriously. Those who heeded that signal and reacted correctly are now leading the AI revolution we are living through.

However, AlphaGo and deep reinforcement learning did not by themselves lead to powerful and more general AI. Many expected deep reinforcement learning to become the dominant AI paradigm after 2016. Instead, the transformer architecture, introduced by researchers from Google in the 2017 “Attention Is All You Need” paper, set the course for a different revolution. The scaling of large language models demonstrated that self-supervised pre-training on massive text corpora could achieve broad capabilities across many domains simultaneously. That realisation led to the current generation of powerful and general models, such as OpenAI’s GPT family of models, Anthropic’s Claude and Google’s Gemini. Although AlphaGo alone did not lead to artificial general intelligence, a combination of foundation models with AlphaGo-style search and planning shows promising results in deep reasoning systems such as Gemini Deep Think.

DeepMind itself never stopped building on AlphaGo's foundations. AlphaStar reached Grandmaster level in StarCraft II, proving reinforcement learning could handle real-time strategy with imperfect information. AlphaChip used RL to design layouts for Google's TPU processors, generating in hours what had previously taken engineers months. AlphaTensor discovered faster matrix multiplication algorithms, improving on a result that had stood for over 50 years. AlphaGeometry 2 reached gold-medal level on International Mathematical Olympiad geometry problems. But the most celebrated successor was AlphaFold. DeepMind turned its attention to protein structure prediction—a 50-year grand challenge in biology—and in 2020, AlphaFold 2 essentially solved it. By 2024, it had been used by over two million researchers across 190 countries. That year, Demis Hassabis and John Jumper received the Nobel Prize in Chemistry—the first Nobel awarded for an AI-enabled scientific breakthrough.

But perhaps the most enduring legacy is not technical. It is the question that Lee Sedol has been living with since that match in Seoul—the question every professional will eventually face: what does mastery mean when a machine can do it better? In a recent interview with MIT Technology Review, he offered an answer: "It's every Go player's dream to play a masterpiece game—a game of technical brilliance, with no mistakes, fought to a razor's edge between evenly matched players. It's like a mirage. Maybe AI can help us play a masterpiece."


Humanity Redefined sheds light on the bleeding edge of technology and how advancements in AI, robotics, and biotech can usher in abundance, expand humanity's horizons, and redefine what it means to be human.
