Nvidia wants to be the AI platform - Sync #563

Plus: GPT-5.4 mini and nano; China approves first commercial BCI; an Australian uses AI to treat his dog's cancer; Google introduces "vibe design"; SpaceX's problematic IPO; and more!

Hello and welcome to Sync #563!

Nvidia’s GTC conference took place this week, with Jensen Huang announcing a slew of new chips, AI systems, models, and more. We will take a closer look at what Nvidia announced and how it all lays the foundation for the company to become a full-stack AI provider.

Elsewhere in AI, we have a range of news coming from OpenAI—including the release of GPT-5.4 mini and nano models, a report on cutting down “side projects” to focus on coding and enterprise, the acquisition of Astral, the delay of “adult mode”, and how OpenAI plans to catch up with Anthropic in coding. Beyond that, Nvidia is restarting production of chips for sale in China, Moonshot AI has reached an $18 billion valuation, Google has introduced “vibe design”, and a rogue AI led to a serious security incident at Meta.

Over in robotics, an Uber co-founder has launched a new robotics startup focused on industry and manufacturing, Amazon has acquired robotic doorstep delivery provider RIVR, and a dancing humanoid robot went haywire in a restaurant, smashing plates.

In addition, this week’s issue of Sync features the first commercial Chinese BCI, how one Australian man used AI tools to help treat his dog’s cancer, a deep dive into bottlenecks in scaling AI compute, SpaceX’s problematic IPO, an uploaded fruit fly brain controlling a virtual fly, and more!

Enjoy!

Nvidia wants to be the AI platform

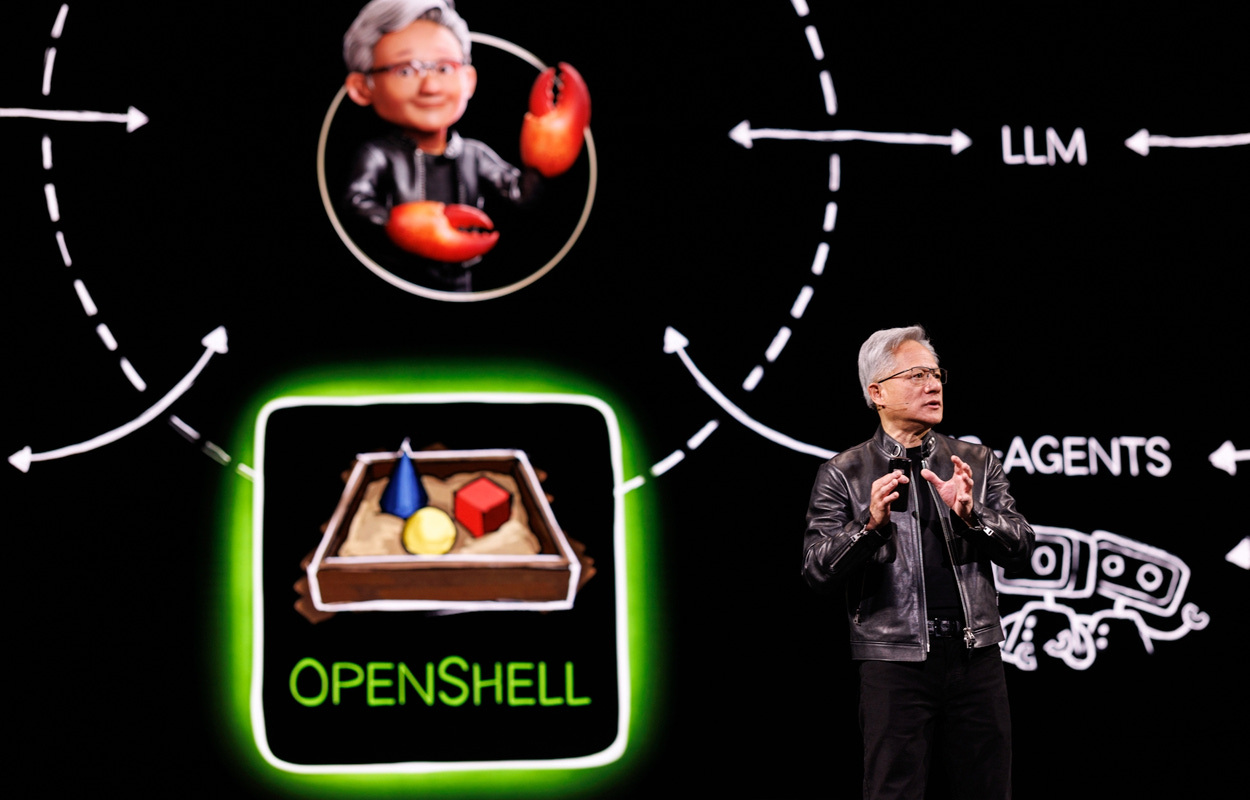

Nvidia’s GTC 2026 keynote was its most ambitious ever. Over nearly two and a half hours, Jensen Huang unveiled new chips and a new AI system, predicted a trillion dollars in demand through 2027, and made the company’s clearest bid yet to evolve from chipmaker to full-stack AI infrastructure architect. But by the end of the week, the stock had fallen 6.6%, shedding roughly $300 billion in market capitalisation.

Let’s look at what Nvidia announced, and why the markets reacted the way they did.

Vera Rubin and the inference bet

The centrepiece was the Vera Rubin platform—Nvidia’s successor to Blackwell and the most comprehensive product launch in its history.

Each Rubin GPU packs 336 billion transistors and 288 GB of HBM4. At rack scale, the NVL72 system—72 GPUs and 36 Vera CPUs in a single liquid-cooled enclosure—delivers 3.6 exaflops of FP4 inference. Nvidia claims 10 times the inference throughput per watt and one-tenth the cost per token versus Blackwell. A single rack produces 700 million tokens per second. At full scale, the Vera Rubin Pod stretches to 40 racks, 1.2 quadrillion transistors, and 60 exaflops.
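To put the rack-level claims in per-GPU terms, the sketch below divides Nvidia’s quoted NVL72 figures evenly across the rack’s 72 GPUs. It is plain arithmetic on marketing numbers and assumes throughput splits evenly across GPUs; it says nothing about real-world utilisation. The function names are hypothetical.

```python
# Back-of-envelope arithmetic using only the NVL72 figures quoted above.
# These are Nvidia's claims, not independent measurements.

RACK_FP4_EXAFLOPS = 3.6       # NVL72 FP4 inference, per Nvidia
GPUS_PER_RACK = 72
RACK_TOKENS_PER_SEC = 700e6   # tokens per second per rack, per Nvidia
HBM4_GB_PER_GPU = 288


def per_gpu_fp4_petaflops(rack_exaflops: float, gpus: int) -> float:
    """Split rack-level FP4 throughput evenly across GPUs (1 EF = 1000 PF)."""
    return rack_exaflops * 1000 / gpus


def rack_hbm_terabytes(gb_per_gpu: int, gpus: int) -> float:
    """Total HBM4 capacity in the rack, using 1 TB = 1000 GB."""
    return gb_per_gpu * gpus / 1000


if __name__ == "__main__":
    pf = per_gpu_fp4_petaflops(RACK_FP4_EXAFLOPS, GPUS_PER_RACK)
    print(f"FP4 per GPU: {pf:.1f} PF")
    print(f"Tokens/s per GPU: {RACK_TOKENS_PER_SEC / GPUS_PER_RACK:,.0f}")
    print(f"HBM4 per rack: {rack_hbm_terabytes(HBM4_GB_PER_GPU, GPUS_PER_RACK):.1f} TB")
```

On these numbers, each GPU accounts for about 50 petaflops of FP4 and the rack holds roughly 20.7 TB of HBM4.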

Vera Rubin is expected to begin shipping in the second half of 2026. Microsoft has already powered on the first NVL72 system for validation in its labs.

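But the most architecturally significant announcement may have been the Groq 3 LPU, the first chip from Nvidia’s roughly $20 billion Groq acquisition. Each LP30 chip contains 512 MB of on-chip SRAM, delivering 150 TB/s of memory bandwidth, nearly 7 times that of the Rubin GPU's HBM4. The design pairs Rubin GPUs for compute-heavy prefill with Groq LPUs for latency-sensitive decode. Prefill processes the whole prompt in parallel and is limited mainly by raw compute, while decode generates output one token at a time and is limited mainly by how fast model weights can be read from memory.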
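Why does decode favour fast on-chip memory? A common back-of-envelope model treats each generated token as requiring a full read of the model weights, so single-stream decode speed is capped at roughly memory bandwidth divided by model size. The sketch below applies that rule to a hypothetical 1-billion-parameter model stored at 4 bits per weight (small enough to fit in one chip’s 512 MB of SRAM) and backs the HBM4 bandwidth out of the "nearly 7 times" ratio above. Both are illustrative assumptions; real systems also contend with KV-cache traffic, batching, and sharding large models across many chips.

```python
# Rough roofline-style ceiling for single-stream decode speed.
# Assumptions (hypothetical, for illustration only):
#   * every generated token requires reading all model weights once;
#   * KV-cache traffic, batching and compute limits are ignored;
#   * HBM4 bandwidth is backed out of the "nearly 7x" ratio in the text.

SRAM_TB_PER_S = 150.0               # Groq 3 LPU on-chip SRAM, per Nvidia
HBM4_TB_PER_S = SRAM_TB_PER_S / 7   # assumption derived from the ~7x claim
SRAM_CAPACITY_GB = 0.512            # 512 MB per chip


def weights_gb(params_billions: float, bits_per_param: int) -> float:
    """Size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * bits_per_param / 8 / 1e9


def decode_ceiling_tokens_per_s(bandwidth_tb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/s when each token streams all weights from memory."""
    return bandwidth_tb_s * 1e12 / (model_gb * 1e9)


if __name__ == "__main__":
    size = weights_gb(params_billions=1.0, bits_per_param=4)  # hypothetical model
    assert size <= SRAM_CAPACITY_GB, "toy model must fit in one chip's SRAM"
    sram = decode_ceiling_tokens_per_s(SRAM_TB_PER_S, size)
    hbm = decode_ceiling_tokens_per_s(HBM4_TB_PER_S, size)
    print(f"model size: {size:.2f} GB")
    print(f"SRAM ceiling: {sram:,.0f} tok/s, HBM4 ceiling: {hbm:,.0f} tok/s")
    print(f"ratio: {sram / hbm:.1f}x")
```

Because the ceiling scales linearly with bandwidth, a roughly 7x bandwidth advantage translates into a roughly 7x higher decode ceiling under this simplified model, which is the intuition behind routing decode to the LPUs.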

The inference push is not optional. As AI moves from training models to running them, inference is fast becoming the biggest workload and the biggest revenue opportunity. It is also where Nvidia faces the most competition. Custom chips from Google, Amazon, and others are built specifically for inference and can be cheaper to run for certain workloads. The Groq acquisition and the Vera Rubin architecture are Nvidia's bet that it can adopt a fundamentally different approach to computing and build it into the platform before rivals own the inference market.

Huang also previewed what comes next. The Vera Rubin Ultra, expected in 2027, introduces a new "Kyber" rack architecture housing 144 GPUs in a single NVLink domain. Beyond that, the Feynman architecture (slated for 2028) will feature a next-generation GPU, the Rosa CPU, the LP40 LPU, BlueField-5 networking, and both copper and co-packaged optics interconnects, all built on 1.6nm process technology.

Agentic AI as the platform play

If Vera Rubin was the hardware star, agentic AI was the strategic throughline. Huang devoted significant stage time to OpenClaw, the open-source agentic framework that exploded in popularity in recent weeks. He even called it the first AI operating system, possibly a bigger deal than Linux (at least if you look only at the GitHub Star History graph).

To capitalise on OpenClaw’s popularity, Nvidia announced NemoClaw—an enterprise-grade production stack layered atop OpenClaw with runtime sandboxing, a privacy router, and network guardrails. It is hardware-agnostic, open-source, and, just like OpenClaw, runs on everything, from a PC with GeForce RTX to a Mac Mini to a cloud instance.

The open model offensive

Nvidia launched six frontier model families at GTC, spanning nearly every domain where AI compute is heading:

Nemotron 3 for language, reasoning, and multimodal AI—Nvidia claims its Ultra variant will be “the best base model in the world”

Cosmos 3, pitched as the first world foundation model unifying synthetic generation, physical AI reasoning, and action simulation

Isaac GR00T for humanoid robotics

Alpamayo 1.5 for autonomous driving with steerable reasoning via natural language

BioNeMo for biology and drug discovery

Earth-2 for weather and climate forecasting

The Nemotron Coalition, a consortium including Mistral AI, Perplexity, Cursor, and LangChain, will jointly develop the next generation of Nemotron models. This is part of Nvidia's broader push into open-weight AI, which includes plans to spend $26 billion over five years building open-weight models.

The logic is the same as it has always been. Models optimised for Nvidia hardware reinforce demand for that hardware. If the most capable open models run best on Nvidia GPUs, every startup, researcher, and enterprise deploying them becomes a customer. It is the CUDA playbook applied to models.

DLSS 5 and the gamer backlash

The only consumer product announced at GTC 2026 was DLSS 5, which Nvidia calls "3D-guided neural rendering." Previous DLSS versions were performance tools that made games run faster by upscaling lower-resolution frames. DLSS 5 is trying to make games look better. Its AI model participates directly in the rendering process, re-rendering lighting and materials in real time based on what it understands about the scene—skin, hair, fabric, environmental light, and reflections.

The response from the gaming community was not what Nvidia expected. Many gamers dismissed it as “AI slop” coming to gaming, comparing the generated images to an AI yassify filter. Game designers and artists pushed back, too, arguing that Nvidia was interfering too heavily with artistic intention.

Huang fired back directly, calling the critics “completely wrong.”

DLSS 5 ships in autumn 2026 as a driver update for existing RTX 50 Series GPUs. In the meantime, we have a new meme template to enjoy.

Other bets: from robots to robotaxis to orbit

Apart from new chips and AI models, Nvidia used GTC to show it is expanding into territories that already require, or soon will, enormous computing power—both in the cloud and at the edge, and soon maybe even in orbit.

The robotics showcase featured over 110 robots on the show floor, headlined by the GR00T N1.7 vision-language-action model for humanoid robots. During the keynote, a robotic Olaf from Frozen joined Huang on stage and demonstrated a simulation-first training pipeline built in Omniverse.

The autonomous driving push landed concrete partnerships. BYD, Hyundai, Nissan, and Geely joined existing partners Mercedes, Toyota, and GM—seven manufacturers producing roughly 18 million vehicles per year. Uber committed to deploying Nvidia-powered robotaxis across 28 cities by 2028.

Sovereign AI continued its rise as a revenue line. Governments that want to build national AI infrastructure on their own soil—the UK, France, Canada, South Korea, Singapore, and others—are buying Nvidia’s full-stack systems. Last fiscal year, sovereign AI revenue tripled to over $30 billion, nearly 14% of Nvidia’s total.
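The sovereign AI figures also imply a rough size for Nvidia’s whole business. Because both inputs are rounded ("over $30 billion", "nearly 14%"), treat the outputs as approximate; the helper name is hypothetical.

```python
def implied_total(segment_revenue_bn: float, share: float) -> float:
    """Total revenue implied by a segment's revenue and its share of the total."""
    return segment_revenue_bn / share


SOVEREIGN_BN = 30.0   # "over $30 billion" (rounded input)
SHARE = 0.14          # "nearly 14%" (rounded input)

total = implied_total(SOVEREIGN_BN, SHARE)
# "tripled" implies the prior year was roughly a third of this year's figure
prior_year_sovereign = SOVEREIGN_BN / 3

print(f"implied total revenue: ~${total:.0f}bn")
print(f"implied prior-year sovereign revenue: ~${prior_year_sovereign:.0f}bn")
```

That puts total revenue somewhere in the low $200 billions and prior-year sovereign revenue at around $10 billion.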

At the other extreme, Nvidia unveiled Space-1, a Vera Rubin computing module for orbital data centres that Nvidia says delivers 25x more AI compute in space than an H100.

Why has the stock fallen?

It was the broadest set of announcements in GTC's history, but it was not enough for Wall Street. Despite a trillion-dollar demand forecast and 40 of 41 tracked analysts rating the stock a buy, Nvidia’s stock fell 6.6% over the conference week, wiping roughly $300 billion off the company’s market capitalisation. For the first time in nearly a year, the stock slipped below its 200-day moving average, breaching a technical floor it had held for 214 consecutive trading days.
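A 200-day moving average is simply the mean of the last 200 daily closing prices, and a figure like "214 consecutive trading days" is a count of how long the close stayed above it. The sketch below computes that streak for any price series. It assumes a simple (not exponential) average that includes the current day’s close and counts closes at or above the average; both are assumptions about how the quoted figure was derived, and the test data is synthetic, not Nvidia’s actual prices.

```python
from collections import deque


def sma_streaks(closes: list[float], window: int = 200) -> list[int]:
    """For each day with a full window, return how many consecutive days
    (including that day) the close has been at or above its simple moving
    average. A value of 0 means the close fell below the average that day."""
    streaks: list[int] = []
    buf: deque[float] = deque(maxlen=window)
    running = 0.0
    streak = 0
    for price in closes:
        if len(buf) == window:
            running -= buf[0]  # this value is about to be evicted by append()
        buf.append(price)
        running += price
        if len(buf) < window:
            continue  # not enough history for a full average yet
        sma = running / window
        streak = streak + 1 if price >= sma else 0
        streaks.append(streak)
    return streaks


if __name__ == "__main__":
    # Synthetic series: a steady climb, then a sharp one-day drop.
    prices = [100.0 + i for i in range(250)] + [50.0]
    s = sma_streaks(prices)
    print(f"longest streak: {max(s)} days, final day streak: {s[-1]}")
```

A running sum keeps each update constant-time, which matters little for a few years of daily data but is the idiomatic way to maintain a rolling mean.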

The reasons had little to do with the announcements themselves. Macro headwinds, including the escalating war in Iran and rising oil prices, rattled markets broadly. Investors also remain sceptical about the sustainability of hyperscaler AI spending, particularly as more Big Tech companies raise debt to fund data centre buildouts. And the trillion-dollar demand forecast was read as an extension of the existing outlook through 2027 rather than as a sign of accelerating demand.

Speaking with TechCrunch, Futurum CEO Daniel Newman captured the paradox neatly: the speed of AI innovation has itself become a source of uncertainty. The markets hate uncertainty—and right now, AI is moving too fast for investors to price confidently, even when the revenue keeps arriving.

The long game

GTC 2026 revealed a company that has moved decisively beyond just selling GPUs. Nvidia is building the full-stack operating layer for the AI economy—from silicon and networking through inference orchestration, agent frameworks, open models, and robotic deployment. By launching six model families, the Nemotron Coalition, and NemoClaw, Nvidia is positioning itself as the default platform for agentic AI. If Huang is right that every SaaS company will become an Agent-as-a-Service company, Nvidia stands to collect tolls at every level.

But the competition is not sleeping. AMD remains the most credible challenger—its MI400 series ships in the second half of 2026 with 432 GB of HBM4, significantly more memory than comparable Nvidia offerings, and its Helios rack-scale solution mirrors the Vera Rubin NVL72 architecture directly. AMD has already published a rebuttal to Nvidia’s GTC inference benchmarks, arguing that on equal footing, its chips deliver lower cost per token.

The hyperscalers are pushing harder still. Google’s TPU v7 now runs over 75% of Gemini computations internally. Microsoft has Maia 200. Amazon has Trainium. Each of them is building toward full vertical integration—controlling the stack from application to silicon. But none is ready to move all of its AI compute to custom chips, and each still relies on Nvidia's hardware.

Nvidia’s lead is real, but it is not permanent. Some analysts expect Nvidia to begin losing share from 2027 as in-house chip programmes scale, particularly in inference. For now, though, Nvidia’s CUDA ecosystem, its one-year architecture cadence, and its software moat create competitive barriers that no rival has yet breached. Whether the gap closes before Nvidia can entrench itself as the platform for the AI economy is the question to watch over the next 18 months.

If you enjoy this post, please click the ❤️ button and share it.

🦾 More than a human

China Approves First Brain Implant for Commercial Use

China has approved its first brain implant for commercial use, allowing Neuracle Technology to sell a device that helps partially paralysed patients regain some hand movement after spinal cord injuries. The approval marks a key step in China's state-backed push to compete with US firms like Neuralink, though Neuracle's implant is relatively limited—sitting outside the brain's outer membrane with fewer channels than more advanced rivals.

Brain Implants Let Paralyzed People Type Nearly as Fast as Smartphone Users

Scientists have helped two paralysed people type using only their thoughts, thanks to brain implants that read imagined finger movements on a familiar keyboard layout. After just 30 practice sentences, one participant reached 22 words per minute—close to typical smartphone typing speeds. The approach is easier and less tiring than existing methods like eye-tracking, and the team is now improving it for everyday long-term use.

🧠 Artificial Intelligence

OpenAI to Cut Back on Side Projects in Push to ‘Nail’ Core Business

OpenAI is scaling back its wide-ranging product efforts to focus on coding tools and business customers, the Wall Street Journal reports. The company's applications chief Fidji Simo said Anthropic's growing lead in the business market should be a "wake-up call," and that spreading resources too thinly across projects like the Sora video app or Atlas web browser had been a distraction. With both companies potentially heading for stock market listings, the move reflects a broader bet that serving developers and businesses is more important than chasing the consumer market.

Introducing GPT‑5.4 mini and nano

OpenAI has released GPT-5.4 mini and GPT-5.4 nano, two smaller, faster variants of its flagship GPT-5.4 model built for latency-sensitive, high-volume workloads such as coding assistants, agentic subagents, and computer-use applications. According to OpenAI, GPT-5.4 mini improves substantially over GPT-5 mini across coding, reasoning, multimodal understanding, and tool use while running more than 2x faster, and approaches GPT-5.4-level performance. GPT-5.4 mini is available now in the API, Codex, and ChatGPT, where Free and Go users can access it via the Thinking feature, and paid users get it as a rate-limit fallback. GPT-5.4 nano is API-only and aimed at lightweight tasks like classification, extraction, and ranking.

OpenAI’s Bid to Allow X-Rated Talk Is Freaking Out Its Own Advisers

OpenAI has delayed the launch of "adult mode," a planned feature enabling sexually explicit text conversations in ChatGPT, citing internal concerns and technical challenges, including an age-prediction system that was misclassifying around 12% of under-18 users as adults. The company's own well-being advisory council unanimously opposed the plan, warning of risks such as emotional dependence and inadequate protections for minors. Sam Altman has framed the feature as treating adults like adults and acknowledged that it could boost growth, but the decision highlights growing tension between commercial incentives and safety at the company.

Nvidia Says It Is Restarting Production of AI Chips for Sale in China

Nvidia is restarting production of its H200 chip for China after months of uncertainty. US and Chinese governments had gone back and forth on whether the sales could go ahead, but both sides now appear to have given the green light—though Nvidia must hand over 25% of the revenue to the US government. Jensen Huang said demand from Chinese buyers is picking up and orders are coming in, but the company hasn't actually made any money from the deal yet.

China AI Startup Moonshot Snags Funds at $18 Billion Valuation

Moonshot AI, the Chinese startup behind the Kimi chatbot, is looking to raise up to $1 billion at a valuation of around $18 billion—four times what it was worth just three months ago. The round reflects strong investor interest in Chinese AI firms challenging Western leaders like OpenAI and Anthropic.

Alibaba Consolidates AI Operations Under New Business Group

Alibaba has consolidated all its AI operations into a new unit called Alibaba Token Hub, bringing together its Tongyi lab, Qwen AI assistant, and other AI divisions. The move reflects the company's belief that AI agents will handle an increasing share of digital work, and comes shortly after the departure of Qwen's technical lead.

Introducing “vibe design” with Stitch

Google has updated Stitch, its AI tool that lets users create UI designs by describing them in plain language, using an approach it calls "vibe design." Powered by Gemini, it produces editable design files and working front-end code. The tool is still experimental, but Google's aim is to collapse the traditionally slow back-and-forth between designers and developers into a single, much faster step.

OpenAI to acquire Astral

OpenAI has announced its intention to acquire Astral, the company behind uv, a popular Python package and project manager. After the deal closes, the Astral team will join OpenAI to work on Codex, the company's AI coding agent. This is yet another acquisition of an open source team by OpenAI (the most notable was the recent hiring of Peter Steinberger, the creator of OpenClaw), and mirrors Anthropic’s acquisition of Bun, a popular JavaScript runtime. For a deeper analysis of the deal's implications for the Python ecosystem, Simon Willison's post is worth reading.

Introducing Perplexity Health

Following OpenAI and Anthropic, Perplexity has launched its own AI tool for health. Called Perplexity Health, it connects to users' medical records, lab results, and wearable devices to answer health questions based on their personal data. According to Perplexity, the new tool draws answers from peer-reviewed medical sources rather than general web content. The service is launching for paid US subscribers first, with a physician advisory board overseeing its development.

Introducing the Machine Payments Protocol

Stripe and Tempo have launched the Machine Payments Protocol (MPP), an open standard that lets AI agents pay for things on the web automatically. It works with regular currencies and stablecoins, and businesses can set it up with just a few lines of code using Stripe's existing tools. Early uses include agents paying for browser access, sending physical mail, and even ordering sandwiches—all without human involvement. It's part of Stripe's bigger bet that AI agents will become major customers on the internet.

▶️ Dylan Patel — Deep Dive on the 3 Big Bottlenecks to Scaling AI Compute (2:31:03)

Dwarkesh Patel sits down with Dylan Patel, founder of SemiAnalysis, to discuss the bottlenecks in scaling AI compute. A key theme is the inertia of the semiconductor supply chain. ASML’s EUV lithography machines, produced in tiny quantities with long lead times, are the ultimate bottleneck capping global chip production for the rest of the decade, while a worsening memory crunch is driving up DRAM prices and squeezing out consumer electronics. The AI labs know what they need, but each layer of the supply chain beneath them is building to a more conservative estimate, and the gap keeps widening. I highly recommend listening to this conversation if you are interested in the economics side of the semiconductor industry during the AI boom.

Introducing MAI-Image-2: for limitless creativity

Microsoft has launched MAI-Image-2, a new image generation model from its AI team that it says ranks third worldwide on the Arena.ai leaderboard. The model is designed to produce realistic photos, handle text within images, and create detailed scenes. It is available now in the MAI Playground and is rolling out to Copilot and Bing Image Creator, with API access for select business customers and wider developer access coming soon.

Mistral Forge

Forge is a platform launched by Mistral that enables businesses to train their own AI models using internal data such as company documents, code, and policies. Its main advantage is that organisations retain full control over both their models and their data. Instead of depending on generic AI systems, companies can create models tailored to their specific processes and terminology, resulting in more accurate and reliable AI agents for real-world applications.

Introducing Mistral Small 4

Mistral has launched Mistral Small 4, a free, open-source AI model that combines text chat, image understanding, coding, and complex reasoning into one package—replacing several of its earlier specialised models. Mistral says it matches or beats larger competitors on key benchmarks while producing shorter, cheaper-to-run responses. The model is available now through Mistral's own API, Hugging Face, and Nvidia’s platform.

China is mobilizing thousands of one-person AI startups

Chinese cities, such as Suzhou, Shanghai, and Wuhan, are competing to attract solo founders who use AI tools to build products without needing employees or investors. Local governments are offering free office space, cheap computing power, and loans, often filling empty buildings in the process. The effort mirrors how China has previously used state backing to rapidly grow industries like electric vehicles. Most of these tiny startups probably won't survive, but the subsidies are giving laid-off tech workers a reason to experiment with AI.

Inside OpenAI’s Race to Catch Up to Claude Code

WIRED spoke with over 30 people at OpenAI, including its top leadership, to learn how the company lost its lead in AI coding tools. After ChatGPT took off in late 2022, OpenAI shelved its dedicated coding team and shifted focus to consumer products, leaving Anthropic to fill the gap with Claude Code—now a $2.5 billion business, more than double what OpenAI’s Codex brings in. OpenAI has been playing catch-up ever since, assembling new teams and trying to buy its way back in.

A rogue AI led to a serious security incident at Meta

An internal AI bot at Meta—described as similar to OpenClaw—posted bad technical advice on a company forum without permission, and when another employee followed that advice, it caused a serious security incident that briefly exposed sensitive data to staff who shouldn’t have seen it. Meta says no user data was misused and blames the engineer for not double-checking the AI’s suggestion. It’s the second time in weeks that an AI agent has caused problems at Meta, highlighting the risks of giving these tools too much independence.

Pentagon Moving to Replace Anthropic Amid AI Feud, Official Says

The Pentagon is replacing Anthropic's AI tools after declaring the company a supply-chain risk, following a dispute over Anthropic's demand for safeguards against mass surveillance and autonomous weapons. According to a Bloomberg interview with the Pentagon's chief digital and AI officer, engineering work on replacement systems is already underway, with OpenAI, xAI and Google all stepping in to fill the gap.

ByteDance reportedly pauses global launch of its Seedance 2.0 video generator

ByteDance has put its Seedance 2.0 AI video tool on hold outside China after Hollywood studios threatened legal action, according to a report from The Information. The tool went viral in February when users created fake clips of real actors, prompting companies like Disney to accuse ByteDance of stealing their intellectual property. The global launch, originally planned for mid-March, is now delayed while the company tries to sort out the legal issues.

Cerebras is coming to AWS

Cerebras and AWS are partnering to deploy Cerebras CS-3 systems in AWS data centres and make them available through AWS Bedrock. The two companies are pairing their respective chips, AWS Trainium for processing queries and Cerebras's wafer-scale engine for generating responses, in a combined setup expected to deliver five times more high-speed token capacity.

Anthropic invests $100 million into the Claude Partner Network

Anthropic has launched the Claude Partner Network, a programme backed by an initial $100 million investment for 2026 to help consultancies, professional services firms, and specialist AI agencies support enterprise adoption of Claude. Partners get training, technical support, marketing help, and access to a new professional certification.

▶️ Newest Gen AI Servers Are Built Like This (20:30)

Patrick from ServeTheHome gets his hands on Nvidia B300 servers and compares them to the previous B200 generation. This video provides a clear overview of the hardware used to serve modern AI models, how these systems operate and connect to one another, how they are powered and cooled, and even what they look like inside.

🤖 Robotics

Uber co-founder Kalanick launches Atoms in specialized robotics push

Travis Kalanick, Uber's co-founder and former CEO, has launched a new startup called Atoms that builds robots for specific jobs in mining, transport, and food production. Rather than trying to create general-purpose humanoid robots, the company focuses on machines designed to perform specific tasks well—an approach Kalanick believes is more practical and profitable.

US Army announces contract with Anduril worth up to $20B

The US Army has awarded Anduril a 10-year deal worth up to $20 billion. The contract bundles over 120 separate purchasing agreements into one, covering Anduril's hardware, software, and services as the Pentagon looks to modernise how it buys technology. Anduril, which made around $2 billion in revenue last year, is also reportedly raising funds at a $60 billion valuation.

Amazon acquires robotic doorstep delivery provider RIVR

Amazon has bought RIVR, a robotics company that makes four-legged wheeled robots for delivering packages to people's doors. RIVR grew out of a robotics lab at ETH Zurich and had already received backing from Jeff Bezos in a 2024 funding round. Unlike other delivery robots, RIVR's robots can handle stairs and rough ground, which could give Amazon a fresh approach after it scrapped its own delivery robot project in 2022.

Employees had to restrain a dancing humanoid robot after it went wild at a California restaurant

A dancing robot at a restaurant in California went haywire near a dining table, smashing plates and scattering dishware while staff scrambled to stop it. The restaurant said the robot wasn't malfunctioning; it had simply been moved too close to a table at a customer's request. The incident raises obvious safety concerns and suggests that humanoid robots with swinging arms might not be the best fit for crowded spaces just yet.

Noble Machines exits stealth with Moby humanoid

Another humanoid robotics company joins the party! Noble Machines has emerged from stealth and revealed that it deployed its Moby humanoid robot at a Fortune Global 500 customer within 18 months of launch. Founded by ex-Apple, SpaceX, NASA, and Caltech engineers, the company emphasises rapid language-based learning and whole-body AI control, claiming its robots can acquire new skills in hours. Moby is aimed at hazardous industrial tasks across manufacturing, logistics, and construction, with a next-generation model on the way.

Gecko Robotics lands the largest U.S. Navy robotics deal yet

Gecko Robotics has signed a five-year deal with the US Navy worth up to $71 million—the Navy's largest robotics contract to date—to deploy inspection robots across its fleet, starting with 18 Pacific Fleet ships. The robots will create digital twins of each vessel to enable predictive maintenance, aiming to boost ship readiness from around 60% to 80% by 2027.

🧬 Biotechnology

An Australian tech entrepreneur used AI to help create the first-ever bespoke cancer vaccine for a dog to treat his beloved pet Rosie

An Australian tech entrepreneur used ChatGPT and AlphaFold to find a way to treat his dog Rosie's cancer after standard treatments failed. With help from scientists, he had Rosie's DNA analysed and a custom mRNA vaccine was created—believed to be the first of its kind for a dog. Most of Rosie's tumours have since shrunk, and while she isn't cured, her health and energy have bounced back. Researchers say the case shows how AI could make personalised cancer treatments faster and more widely available, for animals and eventually humans.

Digital Twin of a Cell Tracks Its Entire Life Cycle Down to the Nanoscale

Scientists have built a detailed digital twin of a living bacterium, simulating nearly all its molecules in 3D as it grows and divides. Unlike simpler models that treat cells as a uniform molecular soup, this one tracks where each molecule sits, which turns out to be crucial for accurately capturing cell division. The simulation matched real experimental results closely, though it took up to six days on a supercomputer to model just 105 minutes of the bacterium’s life. The work could help speed up drug discovery and deepen our understanding of how cells function at the most basic level.
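To put that compute cost in perspective, the arithmetic below compares wall-clock time with simulated time, taking the "up to six days" upper bound at face value. The function name is hypothetical.

```python
def slowdown_factor(wall_clock_minutes: float, simulated_minutes: float) -> float:
    """How many minutes of computation each simulated minute costs."""
    return wall_clock_minutes / simulated_minutes


wall = 6 * 24 * 60  # "up to six days" of supercomputer time, in minutes
simulated = 105     # minutes of the bacterium's life

print(f"{slowdown_factor(wall, simulated):.0f}x slower than real time")
```

In the worst case, then, the simulation runs roughly 80 times slower than the bacterium lives.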

How to Design Antibodies

If you ever wondered how to design an antibody, then this step-by-step guide from Asimov Press is for you. AI tools like BoltzGen can now design antibody candidates computationally, replacing a process that once required screening billions of molecules in the lab. The guide walks through the full workflow—from choosing a protein target to testing designs in a wet lab—using open-source tools and web platforms accessible to non-specialists, with a minimum cost of around $4,000.

💡Tangents

In this video, Patrick Boyle analyses the SpaceX–xAI merger and the upcoming SpaceX IPO. He argues the deal is mainly a financial manoeuvre to bundle a loss-making AI company with a capital-hungry space business before going public, noting that SpaceX itself burns through enormous capital and has never paid a dividend. Boyle highlights serious governance concerns given Musk's controlling role on both sides of the deal, and suggests the merger lets xAI avoid an embarrassing down round while capitalising on the current AI hype before investors look too closely.

The First Multi-Behavior Brain Upload

Eon Systems has shown a complete simulation of a fruit fly brain, with over 125,000 neurons and 50 million synaptic connections, controlling a virtual fly body and producing multiple natural behaviours. It is the first demonstration of its kind, as previous projects either simulated brains without bodies or used AI shortcuts rather than actual brain wiring. The company plans to attempt the same with a mouse brain and, eventually, a human brain.

Future AI chips could be built on glass

In pursuit of greater performance and energy efficiency, the semiconductor industry is turning to glass as a foundation for advanced chip packaging. Glass handles heat far better than the organic materials used for decades, allowing engineers to fit more chips into smaller spaces while using less power. South Korean company Absolics plans to begin commercial production this year, Intel has built working prototypes, and major firms like Samsung are racing to catch up.

Thanks for reading. If you enjoyed this post, please click the ❤️ button and share it!

Humanity Redefined sheds light on the bleeding edge of technology and how advancements in AI, robotics, and biotech can usher in abundance, expand humanity's horizons, and redefine what it means to be human.

A big thank you to my paid subscribers, to my Patrons: whmr, Florian, dux, Eric, Preppikoma and Andrew, and to everyone who supports my work on Ko-Fi. Thank you for the support!

My DMs are open to all subscribers. Feel free to drop me a message, share feedback, or just say "hi!"